Quiet Solidarity

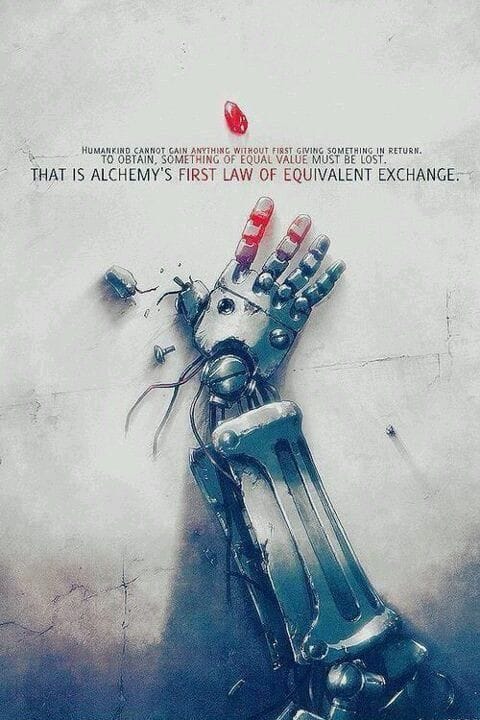

I've been re-watching Fullmetal Alchemist: Brotherhood on Netflix, not for the first time, probably not for the last, because there's something specific about returning to a story whose ending you already know when the actual world has stopped offering legible ones. The nervous system gets to practice resolution without the cost of uncertainty. Ed gets his arm back. Things work out. I know this going in, and I watch anyway (Hiromu Arakawa understood systems before systems thinking was a LinkedIn category), and on Monday morning I close the laptop and get on a call.

Every week lately, somewhere in the first two minutes, someone makes a joke. The sideways kind, aimed at something large and unnameable, timed so precisely it could only come from someone who'd been carrying the same thing as everyone else in the room and just decided to say so obliquely. The laugh that follows is recognizable once you've heard it a few times: it has more relief in it than amusement. And then the first agenda item appears and the meeting is just a meeting again.

What it is, underneath the joke and the exhale and the pivot back to business, is an acknowledgment distributed across a room full of people who mostly didn't sign up to do that for each other but do it anyway: I see that you're carrying something, I'm carrying it too, we don't have time to put it down so let's just say so, briefly, together, and then get back to work. That's the whole transaction. It takes ninety seconds. It's also, in the current climate, load-bearing in a way that's easy to undercount because it doesn't appear anywhere on the agenda.

I've graduated into recessions, worked through a pandemic, watched whole market structures reorganize around technologies that didn't exist the year before. The teams that held together across those periods shared something specific, a feel for when the weight needed naming and how to do it without making the weight the whole meeting, and it usually came from someone, usually not the most senior person in the room (never the most senior person in the room), who'd developed that instinct quietly over time.

What's different now is that the stressors aren't taking turns. AI disruption and geopolitical instability are running simultaneously, which produces a sustained ambient load with no obvious resolution timeline rather than one hard thing that eventually ends. The brain handles these differently. Acute threat produces a kind of clarity: resources narrow to the immediate problem, and action becomes possible. Ambient threat is more corrosive: under sustained threat signals, the executive functions that depend on the prefrontal cortex degrade, and the cognitive capacity required for nuanced judgment, careful social reading, and emotional regulation erodes precisely when teams need it most.

Some thinking is fast and automatic, pattern-matching that runs without effort, the kind that gets you through a commute or a familiar task without taxing anything. Other thinking is slow and deliberate, the kind that costs something and knows it costs something. Reading a room accurately, timing an intervention, absorbing someone's distress and converting it into something the team can function inside. Daniel Kahneman popularized these as System 1 and System 2. Chronic stress depletes System 2 first, which means the most socially and organizationally expensive work, the work that holds teams together, runs on the smallest remaining budget.

Steven Shaw and Gideon Nave at Wharton spent 2025 running experiments on what happens when people reason alongside generative AI under cognitive load, and what they found was a pattern they called cognitive surrender: the tendency to adopt AI outputs with minimal scrutiny, bypassing both intuition and deliberation. Participants chose to consult AI on more than half of all trials even when doing so made them less accurate. The conditions that reliably produced it were stress, depletion, and the availability of something faster than thinking. In their framework, that faster thing is System 3: artificial cognition operating outside the brain, capable of supplementing or supplanting internal reasoning depending on how much internal reasoning the person still has left to give.

The joke at the top of the meeting is a refusal of that path. Someone in that room, under the same conditions that reliably produce surrender in Shaw and Nave's experiments, chose to read the room instead of offloading to it. Chose to time something, to absorb the weight of the moment and convert it into a form the group could receive. That's System 2 running in a context specifically designed to exhaust it, and it's running voluntarily, without a line item on the agenda, without anyone asking.

What cognitive surrender looks like in a team is quiet. The judgment call that gets skipped. The follow-up that doesn't happen because the AI drafted a summary and everyone moved on. The pause before someone answers that nobody registered because the meeting was already running behind. The slow, costly, human attention that drops out first when the load gets heavy and the faster alternative is right there. The joke is evidence that someone in that room is still paying it.

Hiromu Arakawa built an entire cosmology around what happens when the ledger goes unexamined.

The foundational law of alchemy in the series is equivalent exchange: to obtain something, something of equal value must be given in return. Ed and Al spend the entire story paying a debt incurred trying to bring back someone already lost, and somewhere in the middle of paying it they start questioning whether the law that extracted payment is actually just or whether it's simply a law, which turns out to be a meaningful distinction. A law can be technically satisfied while its spirit is violated entirely.

Equivalent exchange as a natural property of systems is different from equivalent exchange as ethics. In thermodynamics the analogue is conservation: nothing comes from nothing, and what gets taken out of a system has to be replenished or the system runs down. Forests work this way. Nutrients cycle or the soil depletes. The principle holds in organizations and families too, whether anyone's tracking it or not.

The two minutes at the top of the standup are an exchange. Someone offers the joke, the room offers the laugh back, the weight gets distributed briefly across everyone present rather than sitting on one person, and people can work. That circuit closing is what makes the next hour possible. Where it breaks down is when the joke is the entirety of the exchange, when the acknowledgment gets filed away and the meeting moves forward and nobody ever asks the person who made the joke how they're doing. The weight gets named and then handed back unshared. The release valve releases into nothing, and the cost, which is real, accumulates in a person rather than a ledger.

The person who makes the joke is usually also the one reading the room for the rest of the meeting, noticing when someone's camera stays off longer than usual, following up afterward, tracking the humans running the work alongside the work itself. The role has no title. It tends to fall to people with enough emotional range to hold weight without visibly buckling, which also means the people in it are among the last anyone thinks to check on (and the first to absorb the cost when the system starts collecting).

Cognitive surrender erodes this work first, quietly, in a way that doesn't register until something is already missing. AI can draft the agenda and summarize the notes and generate the follow-up, but it can't notice that the joke landed differently this week, or register that the person who usually holds the room has gone quieter than usual. That's irreducibly System 2, slow and costly, and it's what humans stop doing first when the tools make stopping easy.

The Tucker episodes of Brotherhood are about this, though the show frames it as horror rather than organizational dynamics (fair framing, honestly). Shou Tucker is a State Alchemist facing recertification, his research stalled, the stakes of failure not abstract. So he produces a result: he transmutes his daughter and the dog into a chimera that can speak, presents it as a breakthrough, passes his review. The horror is in the followable logic. The system required something. He provided it. Equivalent exchange, technically complete, the ledger balanced, nothing recorded about what the output actually cost or who paid.

Ed breaks down when he understands what he's looking at, because the law he'd organized his whole understanding around had just been used to justify something irreversible, and the system had accepted it without complaint: the accounting finished, the cost remaining in the one place the ledger never looked.

Teams extract from their load-bearing members the same way, just more slowly and with better plausible deniability. Results required, results produced, ledger updated, expenditure unrecorded.

Watching Brotherhood on a weekend is, to stretch Shaw and Nave's term, sanctioned cognitive surrender: the ending is known, the debt is someone else's, the alchemy works out, and System 2 gets to rest inside a story that already resolved.

Monday asks for something different. The meeting starts, the joke gets made, the room exhales, and somewhere in there someone is doing the slow expensive work of actually paying attention to the humans around them, completing an exchange the agenda has no line item for. What shifts that work from functional to generative is whether anyone closes the loop past the exhale: whether the person who made the joke gets asked, at some point, how they're actually doing, whether the acknowledgment lands somewhere instead of dissipating back into the morning.

References

Arakawa, H. (2001–2010). Fullmetal Alchemist [Manga]. Square Enix. Adapted as Fullmetal Alchemist: Brotherhood [Anime series], Bones, 2009–2010. Available on Netflix.

Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux.

Shaw, S. D., & Nave, G. (2026). Thinking fast, slow, and artificial: How AI is reshaping human reasoning and the rise of cognitive surrender. Wharton School Research Paper. https://osf.io/preprints/psyarxiv/yk25n_v1