One Dock, With Coordinates

On what happens when you hand a language model a blank canvas and ask it to solve a geometric problem it was never built to solve.

I took the week off of writing for my birthday. In practice, this meant a lot of time in the garage, daydreaming about raised beds and trellises, and time for research!

We've been accumulating materials for a duck loafing dock since fall. Planks of varying lengths, PVC pipe sections (some glued into configurations, others still loose and suggestive), foam cutouts whose shapes only make sense if you already know the plan (usually buried somewhere in our shared Obsidian brain or my partner's head). Every time I walked through the garage this week, something sparked. My partner would pick up a pipe section and gesture at the pond and I'd nod, already picturing a different dock than the one he was describing.

Verbal description leaves the listener with a vague outline, gray and fuzzy concepts the brain attempts to make sense of. "A ramp leading down to the water from the platform's edge" works for at least 4 valid dock geometries. My partner and I would use the same words (ramp, platform, entry point) and be building entirely different structures in parallel. Language keeps all interpretations open. It's generous that way, and useless.

He walked me through a few of his musings, rendered through Claude. I knew better where he was headed. So, I took my pen to paper for my idea and sketched - the design collapsed from potential into specific. One ramp angle. One entry point. One set of spatial relationships that could finally be questioned, challenged, revised from a fixed starting point rather than imagined separately by everyone in the room.

Physicists have a name for this. A quantum system in superposition holds all possible states simultaneously, each with some probability, until observation forces it into one. The act of measurement collapses the wave function (the Copenhagen interpretation is contested and only one of several theories in this space). Before the sketch, every version of the dock ran in parallel, all of them possible. After it: one dock, with coordinates, arguable and buildable.

Then I took my version of the dock to Claude, too.

The prompt was roughly this. Here's a hand-drawn schematic of a duck loafing dock, pond's north edge at the top, ramp entering from the south. Render it as an SVG with accurate proportions and labeled components.

V1 came back with north drifted to the right edge of the diagram. The ramp (drawn in the sketch as a gradual slope) was rendered at an angle that would require the ducks to mountaineer their way up. One PVC section floated, connected to nothing structural. I also learned, somewhere in all this research, that ducklings are so densely fluffed they can fall stories from a nest and land on grass without a scratch. Which explains a confidence level in their locomotion that I now find slightly more reasonable for the AI-generated designs.

V2 corrected the compass orientation but introduced proportion drift. The platform shrank. The PVC planters somehow on the very edge of the dock.

V3, below, is close enough to be useful. Still wrong in a few places. Curiosity wouldn't let this sit idly with me, so off I went to figure out what was happening under the hood.

Language models generate SVG the same way they generate everything else: one token at a time, left to right, no spatial state maintained between them. When the model writes <x="130"> for the dock platform and then <x="240"> for the ramp attachment 800 tokens later, it's pattern-matching on how SVG code typically looks in training data, not reasoning geometrically about the coordinate system it's building.

A human drawing this in Illustrator has the whole canvas in view. The model has only the tokens already written. It knows, statistically, that a label usually appears to the right of its anchor point by about this much, that a ramp usually terminates somewhere in the lower third of the frame. But "about this much" isn't a coordinate. The model is writing from memory rather than from sight, and memory doesn't track cumulative drift.

Ask the model what a ramp does. Connects higher surfaces to lower ones, labels belong near the things they name. It probably has a decent read on what ducks prefer in a loafing platform (flat, south-facing, ideally no 60-degree entry requirement). The translation to coordinates is where it approximates; "near" has to resolve to a specific x value, "gradual slope" has to become an angle in SVG path notation. Each semantic relationship gets converted into a precise numeric value from statistical priors, and those approximations compound. A small drift in the ramp's starting position becomes a large drift in its terminus. The label for "North" ends up closer to east because the model's sense of the canvas isn't tracking the actual canvas; it's tracking the tokens it's already produced.

One research tangent into maritime navigation history led me to learn that "dead reckoning" works the same way. Navigators without GPS estimate position from the last known fixed point (usually using the stars), plus speed and heading direction. It's reasonable over short distances, but accumulated error makes it unreliable on a long voyage.

Researchers have been measuring this problem systematically for a few years. (I went looking for more papers while writing this, mostly to check whether the dock iterations were just bad prompting on my part. They weren't.) A 2023 paper from ProtagoLabs benchmarked ChatGPT on plotting spatial points, 2D route planning, and 3D pathfinding, finding that performance degraded steadily as task complexity increased. A 2025 study probing spatial reasoning across five task types found an average accuracy loss of 42.7% as task complexity scaled, with some tasks reaching 84% degradation. Hayashi and Hirata, writing in March 2026, tried giving frontier models an external imagery module as a "cognitive prosthetic" and asked them to solve 3D rotation tasks. Best accuracy reached 62.5% even with the spatial burden offloaded, and their conclusion was that the limitation runs deeper than maintaining holistic state; current frontier models lack the foundational visual-spatial primitives required to interface with imagery at all.

The duck dock is a small instance of a documented, structural problem. What the model says it knows about space and what it can accurately produce consistently diverge. That divergence is architectural, showing up whether the task is plotting points, rotating shapes, or rendering a loafing dock schematic.

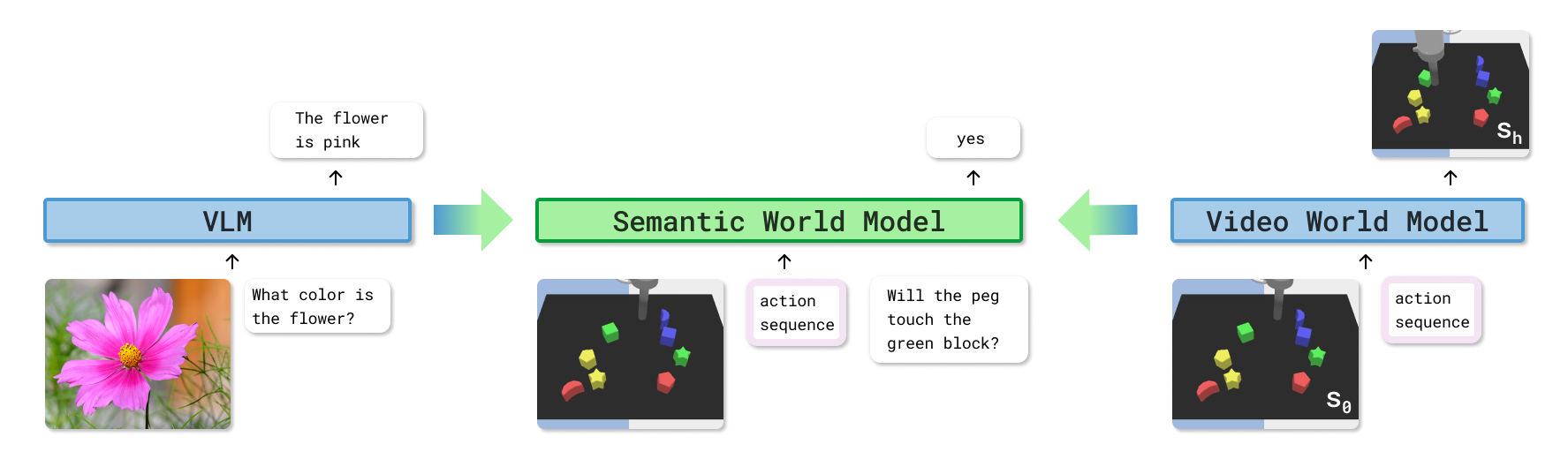

Chollet's ARC-AGI benchmark has been asking this question since 2019; frontier models consistently break on novel reasoning configurations, recognizing patterns from training rather than generalizing to new ones. While pulling threads for this article, I found LeCun making a version of the same argument at a much bigger scale; LLMs trained on text can describe spatial relationships but can't model them, and fixing it requires a different kind of system entirely. (The leading candidate for fixing this structurally, at least according to LeCun and the several billion dollars currently chasing his thesis, is world models — AI systems that learn representations of physical reality rather than predicting text. That's a longer conversation.)

It also occurred to me that an SVG is 2 languages coexisting in the same file; the content layer names and relates things (what the dock is called, what the ramp connects, what each element represents), while the geometric layer positions and scales them (x, y, width, height, transform). Both are written as text, therefore both are legible to the model.

However, there is an asymmetry. The content side is predictable - it draws on the same language knowledge the model uses everywhere. The geometric side approximates - spatial precision is an arithmetic problem, and arithmetic in a probabilistic system accumulates error the way dead reckoning does on a long voyage. Whether this shifts as the technology evolves is an open question. The architectural wrapper is the practical answer available today.

Figma has been navigating this asymmetry for years. Components carry semantic identity (names, variants, tokens), while auto-layout constraints and grid systems own the geometry. The architecture keeps those concerns distinct, even when the canvas holds both.

Given feedback, the model will evaluate coordinate inconsistencies, catch proportion mismatches, revise specific values. Generating the full coordinate system from scratch, reliably, on a blank canvas, is where it drifts.

Tools that handle spatial output well architect around the asymmetry rather than fighting it. Templates fix the coordinate system ahead of time while structured prompting narrows the space in which the model can approximate. That way, the model fills semantic content into a geometric container it didn't have to build. Labels, descriptions, relationships, intent.

The bonus is that because the model can read the geometric layer as text, it can still modify the template, respond to feedback about positioning, catch internal inconsistencies. The model can read both - generating both simultaneously from scratch is where the seam shows.

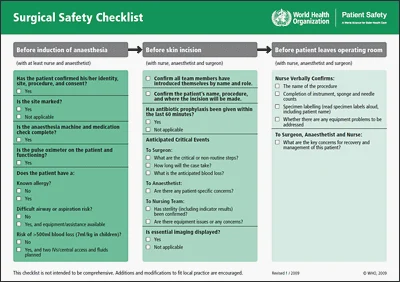

Microsoft named this pattern explicitly this week in their Legal Agent for Word; their redlining engine applies, in their words, "a deterministic resolution layer over the edits, instead of relying on an LLM to generate every revision directly," because legal workflows need precision and auditability.

The principle is old. Blueprints, building plans, surgical protocols, legal contract playbooks; every high-stakes domain that requires reproducibility has built some version of the same answer. Fix the coordinate system. Let the flexible system operate inside it. The observation collapses the superposition into something you can act on. What's new is mapping where the seam is when one of those systems is probabilistic by design.

If you're building with language models, locating the seam is the design work. That sounds abstract until you're staring at a loafing dock schematic where the ramp ends in open water. The seam runs between what the model does well and what it approximates. Asking a model to interpret what a design decision communicates, articulate the tradeoff between two approaches, or describe the relationship between spatial elements, all of that sits well inside the fluency zone. Asking it to generate the coordinate system those elements will live in, from scratch, with accuracy that compounds across hundreds of tokens, is where you want the template. The prompt that asks the model to do both at once is the prompt that produces the V1 version of the dock.

This holds beyond spatial tasks. Legal redlines need every tracked change to correctly reference the source clause. Code generation needs variable names to stay consistent across functions and files. Financial models need formulas to reference the right cells across sheets. In each case the underlying problem is the same; the model is generating output that requires internal precision over a long sequence, and long sequences are where statistical approximation compounds into drift.

The deterministic scaffold fixes the reference system so the model operates inside it rather than constructing it on the fly. The model's semantic output improves when it's not also managing geometric precision; the reasoning is cleaner, the labels more accurate, the described relationships more considered. That improvement is easy to miss when you're focused on why the ramp is floating.

Hayashi and Hirata name this kind of architecture a "cognitive prosthetic," an external system that handles a capability the primary system lacks. The architectural wrapper gives the model a spatial reference system to work inside rather than one it has to generate. Most production AI systems that work well in constrained domains are built this way, whether or not the teams who built them describe it in those terms.

The duck dock took 3 iterations because I handed the model a blank canvas and asked it to solve both problems simultaneously. The sketch came later that night, after the kids went to sleep; my partner and I were already in front of Claude, talking through homestead designs while he laid out PVC sections that weren't random (a first flush diverter for the rain barrels, each pipe chosen for a specific job). I drew it mid-conversation, and the design finally had one geometry. Next time, the prompt starts there.

References

- Microsoft Word Legal Agent announcement, Sumit Chauhan, Microsoft 365 Copilot Blog, April 30 2026

- Stuck in the Matrix: Probing Spatial Reasoning in Large Language Models, Bai et al., October 2025 https://arxiv.org/abs/2510.20198

- WHO Surgical Safety Checklist, World Health Organization / Ariadne Labs, 2009. Licensed for free use and adaptation with attribution. Available at who.int/teams/integrated-health-services/patient-safety/research/safe-surgery

- Inherent Limitations of GPT-4 Regarding Spatial Information, Yan et al., 2023 https://arxiv.org/abs/2312.03042

- Limits of Imagery Reasoning in Frontier LLM Models, Hayashi and Hirata, March 2026 https://arxiv.org/abs/2603.26779

- ARC-AGI benchmark, François Chollet

- Yann LeCun on world models and LLM spatial limitations