The Wait Is the Work

Last Sunday was supposed to be too late.

The plan had us planting saplings on Friday. Pear, apple, raspberry, blueberry, each one already mapped to a spot, a season, a role in something larger we're building. We'd timed it, accounted for it, built it into the week. Then the rain came and didn't stop, and Friday became Saturday became Sunday, and at some point my partner and I stopped arguing with the weather and just waited.

Sunday was sunny. The kids came out. Small hands in cold dirt, questions about why trees need holes, a general chaos that added forty minutes to a task we'd already mentally finished two days prior.

The saplings went in healthy. The kids have a memory now. We have photographic evidence that our children will, under the right conditions, help with things. None of that was in the Friday plan.

I've been thinking about what actually happened there, beyond the obvious. We didn't make a better decision. We got routed around our decision entirely. The weather closed the optimized path and something else emerged: unplanned participation, better conditions, an afternoon that mattered in a way a Friday wouldn't have.

This keeps surfacing for me in a design context, specifically in how we build AI products. The dominant instinct right now is to minimize the distance between question and answer, between need and response. Speed is treated as quality. Latency is a bug. The cursor blinks too long and we feel like something's broken.

What we've decided, as an industry, is that the pause is the problem to solve.

I think that's wrong. And I think it's quietly making us worse at the part of thinking that matters most.

Janelle Monáe has a song on Sesame Street called "The Power of Yet." It's about not having learned something, about the incompleteness of "I don't know" being a beginning rather than a verdict. It's a children's song in the best possible way: it says something true without hedging.

"Yet" is a design choice. It holds the space open instead of collapsing it.

Most AI products are built to eliminate "yet." Someone types a half-formed question and the system completes it before they've finished thinking. Someone sits with an ambiguous problem and the interface surfaces three options before they've named what's actually bothering them. The resolution arrives faster than the inquiry. That isn't acceleration. It's substitution: the system's pattern recognition standing in for the user's actual thought.

The distinction matters because inquiry and response aren't the same cognitive activity. Formulating a good question requires sitting inside uncertainty for a moment, feeling the edges of what you don't know, tolerating the discomfort of not-yet. Systems that resolve that discomfort instantly aren't helping users think better. They're thinking adjacent to the user while the user watches.

Pierce Edmiston and I talked about this on The Fuse and The Flint podcast, in an episode about AI-assisted coding. He made an observation about how he actually navigates Stack Overflow: when he hits a problem, he doesn't start with the original question. He skips straight to the first answer.

The reason, he explained, is that the first answer is often responding to a better question than the one that was actually posted. The person answering intuited what the original poster was really trying to solve, set the literal question aside, and answered that version instead. The original poster may not have even known that reframe was happening. The answer just worked, for reasons they couldn't fully articulate.

What Stack Overflow built, through community norms rather than interface design, is a correction mechanism for premature closure. The reframe gets inserted by someone who cared more about solving the actual problem than answering the literal one, and who had enough experience to see the gap between the two. It didn't come from a feature. It came from accumulated human judgment about what good help actually looks like.

Product design can't easily replicate that. It can approximate it — some AI systems now ask clarifying questions before responding, which is a step. But there's a difference between a system prompted to ask a clarifying question and a community that organically developed the norm of doing so because the alternative produced worse outcomes. One is a feature. The other is a value, practiced into existence over time.

Most AI products are optimizing to eliminate the moment where someone says "wait, is that actually the right question." That moment is often the most valuable thing in the exchange. Tversky and Kahneman's foundational framing research established that how a problem is posed shapes not just the answer people choose, but the options they consider at all — people working from a flawed frame don't just reach wrong conclusions, they never see the right ones. A system that answers your first version of a question thoroughly is not neutral. It's locking in the frame you arrived with.

There's a thought experiment worth trying here, one that doesn't require a timer or a worksheet.

Next time you open an AI tool with something genuinely unresolved, write the question down somewhere else first — by hand, or in a plain text file — before you type it into the interface. Let it sit for a moment. Notice whether the thing you were about to ask is actually the thing you meant to ask, or whether it was just the first version of the thing. Then go ask it.

What you're doing in that pause is something the interface isn't designed to support: forming the inquiry before outsourcing the response. The quality of what comes back is almost entirely determined by the precision of what goes in. Precision requires a little discomfort first. Most products are built to skip that step.

Tristan Harris spent years as a design ethicist at Google before co-founding the Center for Humane Technology. His original critique was of social media: the way attention-harvesting design deteriorates our ability to focus, weakens relationships, and generates what he named "human downgrading" — an interconnected system of addiction, distraction, and polarization that strengthens as the path of least resistance gets smoother.

When generative AI arrived, CHT expanded its focus. Harris framed it as a second contact point between humanity and algorithmic systems — the first being social media's race to the bottom of the brain stem, the second being AI's race to roll out: to take shortcuts, to onboard as many people as possible as fast as possible, to treat deployment velocity as the primary measure of success. Different technology, same underlying incentive structure. The question of what social media was for — not what it could do, but what it was doing to the people using it — was never seriously asked until the damage was already visible. Harris's concern is that we're making the same category of mistake with AI, and faster.

That argument applies specifically to the design choices being made right now inside AI products. The people who built the first generation of generative AI consumer tools came largely from the same product cultures, the same metric frameworks, and the same foundational assumption that Harris was critiquing: that a faster response is a better response, that latency is always a cost, that the empty state is always a problem to fill. That assumption wasn't examined on the way in. It was just the water they'd been swimming in for a decade.

What gets optimized in AI products today: speed, capability, task completion, user retention. What doesn't get measured: whether users are thinking more clearly, asking better questions, or building the kind of judgment that compounds over time. Engagement is legible to a dashboard. Inquiry isn't. So we keep shipping engagement, and the question of what AI is actually doing to the people using it sits largely unasked — exactly as it did with social media, one contact point earlier.

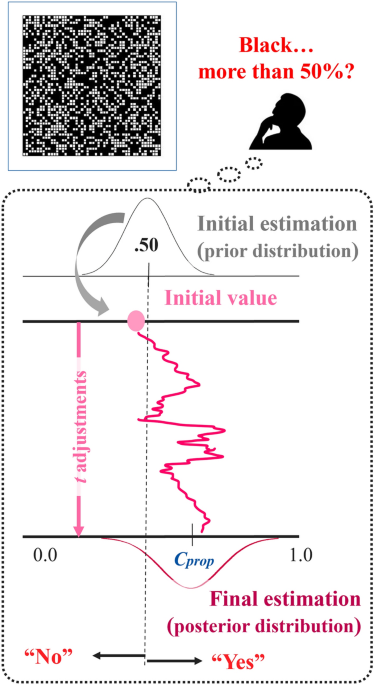

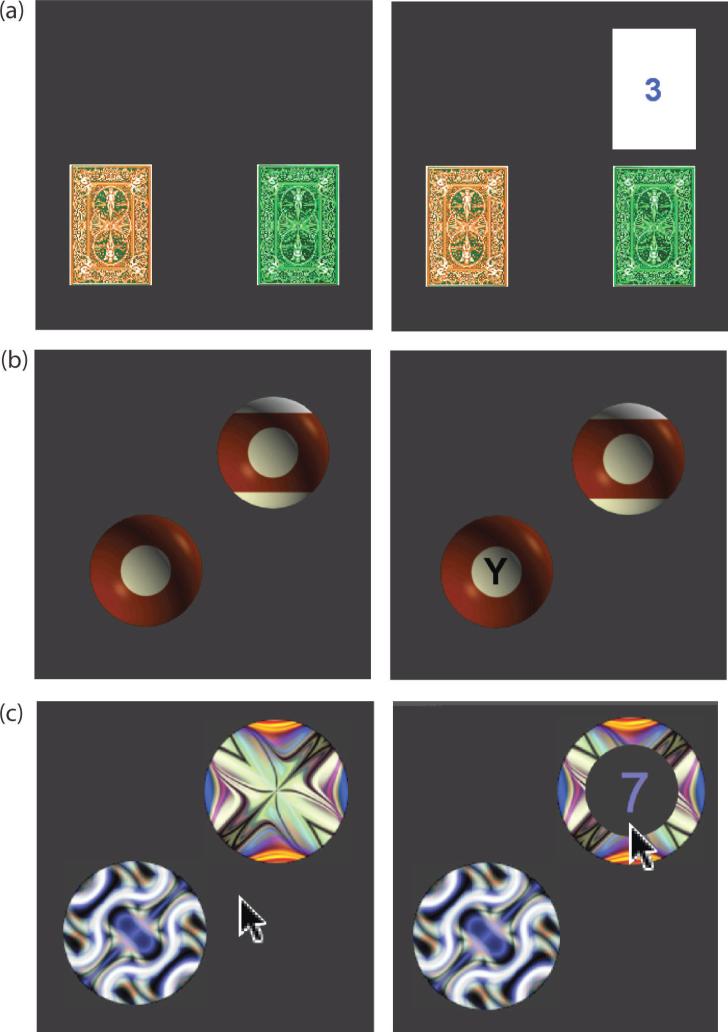

A 2025 study in Scientific Reports found that inserting even a brief pause before presenting decision alternatives — no instructions required, just the wait — "clears the mind of prior judgment bias, restores present attention, and prepares the mind for future judgments." The pause itself did the work. No feature, no prompt, no mindfulness widget. Just held latency.

Princeton researchers documented the mechanism underneath: humans have a consistent bias toward the less cognitively demanding option across a wide range of choice settings, a pattern known as the "law of less work." We will reliably take the easier path when one is available. Design can exploit this. When the easiest path is also the fastest and the most available, you're not meeting users where they are. You're systematically routing them away from the harder work, the work that compounds into something over time.

Those routes get overgrown. The reflex to sit with a question before resolving it atrophies quietly. The answers keep coming. They're just shallower than they used to be, and you've stopped noticing the difference.

The kids were in the yard on Sunday because we waited. They didn't understand why we were planting in March, or why some trees take longer than others. But they watched two adults make space for something that wasn't ready yet, and they put their hands in the dirt anyway.

I don't think you can instruct a child to sit with uncertainty. I think you can only let them watch you do it.

What does a product look like that's designed for quality of inquiry instead of speed of response? I'm still working that out. Some of it is interaction design: when do you complete a thought versus hold space for one? Some of it is architecture: what does it mean to build a loop that surfaces the next question rather than the nearest answer? Some of it is instrumentation: what would you even measure to know if users were thinking better, not just faster?

The metrics don't exist yet because we haven't decided that's what we're building toward. Engagement is measurable. Inquiry isn't. So we keep shipping engagement.

The blueberry bushes won't produce for two to three years. We planted them anyway, for a version of this place and this life that we can only partly see from here. That's the whole argument, really. Some things only come to you after the wait.

Editor's note: Waiting is my Achilles' heel. Practicing the wait forces me to sit in my own discomfort, one minute at a time. When I finally connect, I push myself away, back into the wait; it's safe, but painful.