The Translator in the Room

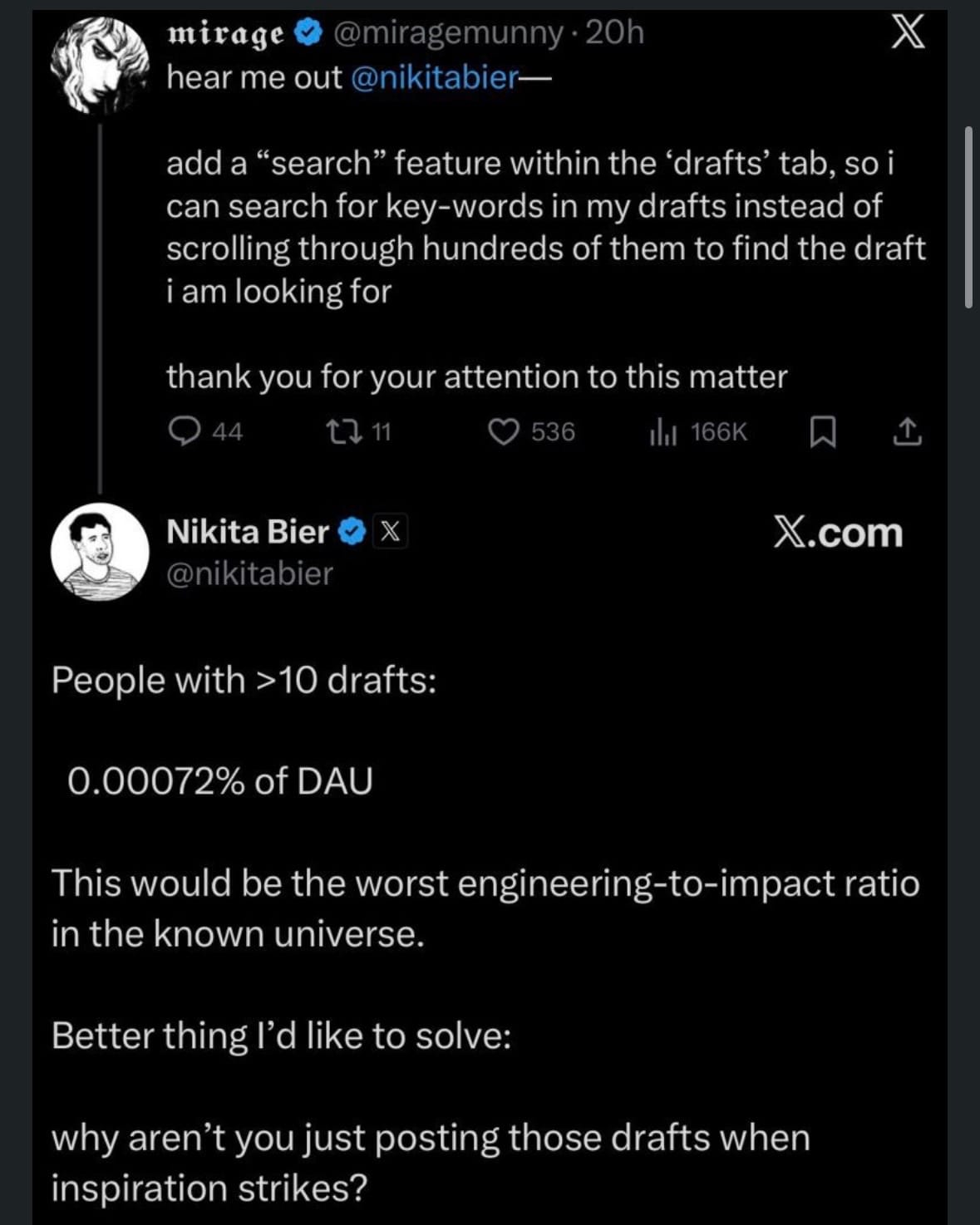

Aakash Gupta, a former VP of Product and one of the more widely read voices in the PM world, flagged an exchange worth paying attention to in a recent LinkedIn post. An X user had asked Nikita Bier, head of product at X, for a feature: add search to the drafts tab, so they could find posts without scrolling through hundreds of them.

Reasonable. Relatable. The kind of thing that gets logged, prioritized at a 3, and lives in the backlog until the next roadmap cycle.

Nikita's response: people with more than 10 drafts represent 0.00072% of daily active users (DAU). "This would be the worst engineering-to-impact ratio in the known universe." Then, the actual question: why aren't you posting those drafts when inspiration strikes?

The problem underneath was different from the one advertised. The user was struggling to publish, full stop. Search would have added a layer over a problem that needed a different solution entirely. A discovery problem on the surface. A friction problem underneath. Building the requested feature would have papered right over it.

(This is also, by the way, one of the more under-appreciated risks of AI-driven product slop: automation without curation, whether by human or agent. Imagine that ticket coming in and an agent just implementing it, no interrogation, no pushback, no "wait, what are you actually trying to do." Now imagine that happening at scale, across every request, every sprint. Feature bloat compounds quickly. Yes, you could measure engagement over time and course-correct, but by then trust is already eroding. Much harder to earn back than it was to lose.)

I've seen this pattern my whole career. The specific memory I keep returning to is from early on.

I was working on predicting Medicare fraud and making that prediction actionable for call center representatives. The model was real, based on (then) poorly transcribed phone calls converted into a mix of unstructured and structured data. I treated language as data, applying dimensionality reduction and k-means clustering and running a binomial regression. Back then, that was considered fancy. The team was proud of it, as they should have been.

But I kept getting stuck on a different question. How does a call center rep use this within 30 seconds of a senior citizen calling in distress about a medical device they never ordered? How do you ease that caller's confusion, in real time, while also confirming information that might protect the next potential victim?

Human questions. And in too many of the rooms I sat in, we were asking every question except those. Is it really AI if it's a big decision tree? Will they think it's too simple if it's just a linear regression? So many conversations about the model. Almost none about the person on the other end of the phone.

The call center rep needed answers they could act on in the moment. Predictive variables then, and parameter weights and prompt tuning now, are answers to a different problem entirely. The right question was always about the person on the phone, and what they needed to do their job with care.

That's the lesson I learned early and have been relearning ever since.

It turns out I'm not the only one who's noticed this pattern. There's a whole field of research built around it. It's called Transformative Service Research, and it studies the relationship between how services are designed and what they actually do to the people receiving them. The measures that matter are real wellbeing outcomes at the individual, community, and societal levels. The kind of impact that lives upstream of a satisfaction score.

The foundational argument, developed by researchers at Arizona State and published in the Journal of Business Research, is that services have the power to uplift or harm the people they're meant to serve. The difference usually lives in one place: whether the human on the receiving end was genuinely the starting point. The actual person, with the actual problem, at the center of every design decision.

A 2022 special issue in the Journal of Service Research looked specifically at unintended consequences in service design. One finding stayed with me: when large datasets, analytics, and AI are used as the primary decision-making input, the harm tends to show up at the exact moment data displaces human interaction. While the model keeps performing, the conversation disappears. Trust erodes, care quality drops, and the people running the system often miss it entirely because the metrics still look fine. To me, this is a flaw in the orientation more than in the technology.

So what does designing for the right person and the right problem actually look like in practice?

Most people, when they encounter new technology, aim their curiosity at the technology itself. That's natural. The technology is novel, and usually someone in the room is excited to explain it. What gets less airtime is the person on the other side.

Customer obsession gets framed as a sales value or a thing to do, but it is rarely practiced. It's really an epistemic value. It means wanting to understand the problem more than you want to demonstrate the solution. Those two orientations can look identical from the outside, right up until the moment they diverge (which happens more frequently than you may realize).

The part of this I think about most is where curiosity gets aimed.

Technical curiosity is real and valuable. Understanding how a system works, what its constraints are, where it breaks: that matters (and I quite enjoy it!). But technical curiosity with human curiosity underneath it, the two running together, is what produces things that are both impressive and actually useful. The technology knows how to do something. The person using it knows what they need.

The skill that bridges those two things is translation. Plain language when someone needs orientation. Technical depth when their curiosity opens up for it. Moving between those in real time, reading the room as you go, without losing the thread of either. People who are good at this are good at simultaneous attention. They hold what they know about the system and what they know about the person at the same time, and figure out which one to surface in the moment.

That's empathy in a technical context, and it's more learnable than people think. Three questions have served me well, whether I'm in a customer conversation or sitting with a request someone handed me:

- What are you trying to do, and what's getting in the way? The goal here is the actual problem, not the proposed solution dressed up as a request. People often arrive with a feature in mind. The work is getting to what's underneath it.

- What would good look like for you? This surfaces the real success metric, which is rarely the one on your dashboard. People know what relief feels like. They can usually describe it, if you ask.

- What have you already tried? The most underused question in discovery. It tells you where the person's mental model is, what they've already ruled out, and how much friction they've absorbed before they got to you.

These questions aren't novel, and yet making them a reflex instead of a special occasion seems to be. Similarly, reading the room (and not being the loudest voice in it) is a powerful skill to hone.

I was presenting outcomes from a client pilot recently. The energy in the Zoom was bright, engaged, genuinely curious and excited to hear a customer story. What I noticed was a specific kind of readiness: the intelligence was there, the curiosity was there, and what remained was practice. Getting closer to the customer. Hearing their voice directly. Learning to let that voice shape the work, not just inform it.

Try to read the room before anyone says anything. You may see the eyes that glaze over slightly. The furrowed brow. The long pause before the next question, the one where someone is trying to figure out what they're actually looking at. That pause is usually the first tell.

When I see it, I make a choice:

- If someone seems reluctant to admit they're lost, I share my own thinking instead, offer a handhold, try to bring them along without making it a thing.

- If someone seems genuinely curious but stuck, I ask them directly: what are you thinking right now? That question, more often than you'd expect, surfaces what they're actually trying to get out of the whole interaction. The real need, unfiltered.

Most organizations build teams that are excellent at solving problems. Fewer build teams that are practiced at finding the right ones. The more you practice it, the more instinctive it becomes to reach past the stated request toward the actual problem underneath.

Someone gives you something (money, time, attention, a referral) because you give them something they couldn't easily get elsewhere. Everything else (the technology, the roadmap, the model architecture, the OKRs) is scaffolding. The scaffolding matters, but only if you remember what it's holding up, and for whom.

References

Anderson, L., Ostrom, A. L., Corus, C., Fisk, R. P., Gallan, A. S., Giraldo, M., Mende, M., Mulder, M., Rayburn, S. W., Rosenbaum, M. S., Shirahada, K., & Williams, J. D. (2013). Transformative service research: An agenda for the future. Journal of Business Research, 66(8), 1203–1210. https://doi.org/10.1016/j.jbusres.2012.08.013

Blocker, C. P., Davis, B., & Anderson, L. (2022). Unintended consequences in transformative service research: Helping without harming. Journal of Service Research, 25(1). https://doi.org/10.1177/10946705211061190