The Dirty Pool

This essay is part of Deep Familiar Ground — a series on distributed nodes, earned trust, and the quiet work of building systems that don't fall apart when you're not watching. The companion piece is The Chain of Trust.

Why the Validation Layer is the Moat

In February 2018, Roche announced it was acquiring Flatiron Health for $1.9 billion.

That number got attention. What got less attention was what Roche’s CEO said about why. Not the software. Not the customer base. Not the EHR platform serving community oncology practices across the country. The reason, stated plainly in the press release: “regulatory-grade real-world evidence.”

Roche, the world’s largest biotech company and one that develops cancer treatments for a living, paid $1.9 billion for the right to trust a dataset.

That’s worth sitting with, especially now, when agentic AI tools are starting to do at speed what Flatiron’s data abstractors did by hand. Organizations with validated substrates underneath them are about to pull very far ahead of the ones without.

What Flatiron Actually Built

Flatiron started in 2012 with a straightforward problem: oncology data was everywhere and usable nowhere. Electronic health records captured what happened to cancer patients, but in formats so inconsistent, so fragmented across systems, so full of unstructured clinical notes written in physician shorthand, that no one could actually learn from it at scale.

The insight wasn’t technical. It was operational: you can’t automate your way out of this problem. Not in 2012. Probably not now. Structured oncology data, the kind you can run a real-world evidence study on and the kind the FDA will actually look at, requires a human being to read a clinical note and extract what the physician meant.

So Flatiron built an army of data abstractors: trained oncology data curators working through medical records, pulling structured signal out of narrative noise, record by record. Former FDA commissioner Robert Califf put it bluntly at the time of the acquisition: “relentless curation of data, an army of ‘data janitors’ transforming EHR data into analyzable, actionable information. Congrats to the Flatiron team, this was hard work paying off, not slogans and glitz.”

That’s the line. Hard work, not glitz.

By 2018, Flatiron had curated data from over 265 community cancer clinics and six major academic research centers. They had partnered with 14 of the top 15 oncology therapeutic companies. They had worked with the FDA to develop new standards for how real-world evidence could be used in regulatory decision-making. The EHR software was the mechanism. The curation was the moat. But it’s worth being precise about what the moat actually consisted of, because it wasn’t just clean data.

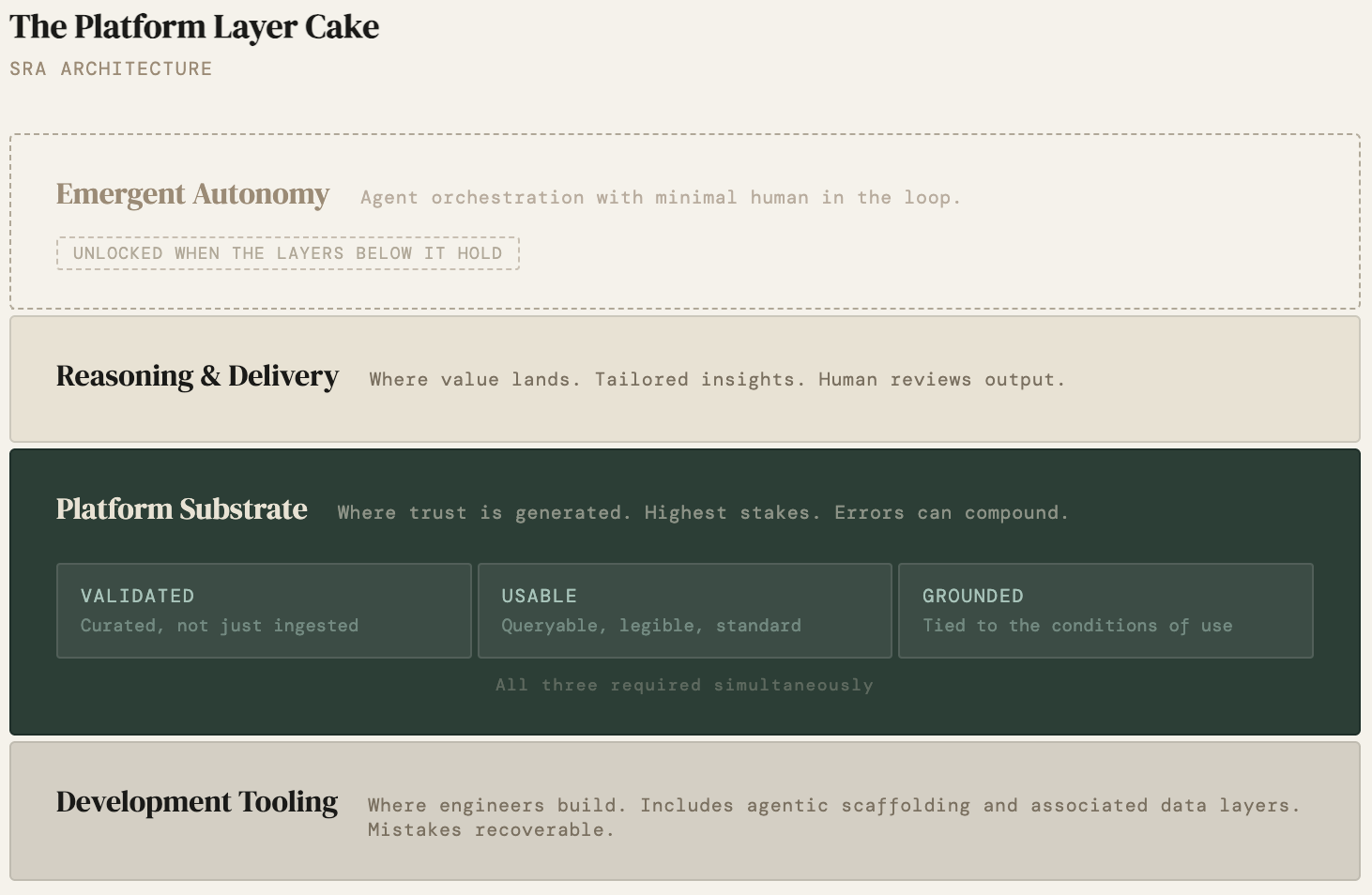

The previous essay described three buckets for where AI belongs in a platform architecture: development tooling, the platform substrate, and reasoning and delivery. Flatiron’s story is a case study in what it takes to earn the right to occupy the platform substrate at all. To earn it, the data layer itself has to clear three separate bars.

Flatiron cleared all three, and all three had to be true at once. The data had to be validated: curated from clinical notes by trained abstractors, not just ingested. It had to be usable: structured to common standards, queryable by researchers who weren’t Flatiron engineers, legible to the FDA. And it had to be actionable: connected to the actual workflows where cancer treatment decisions get made, embedded in the practices and research centers where the questions lived. Remove any one of those and the other two don’t get you to $1.9 billion. Clean data that nobody can query is an archive. Queryable data that isn’t connected to a decision moment is a research curiosity. Actionable data built on unvalidated records is confident noise.

Roche didn’t buy a software product. They bought a chain of custody: a validated path from clinical observation to analyzable truth that no one else had built and that would take years and enormous operational investment to replicate. That’s what $1.9 billion bought: the right to trust the data underneath the insights.

The Other Side of the Pool

In 2024, the Centers for Medicare and Medicaid Services published an audit of Medicare Advantage plans that found 52% of provider locations contained at least one inaccuracy.

Read that again. More than half of the records health insurers use to tell patients which doctors are in their network, records that determine whether someone’s care is covered, whether they can see a specialist, whether they end up with a surprise bill, were wrong in at least one field.

A startup called Certify, which builds provider data intelligence platforms, described the structural problem this way:

“Think about it as a dirty pool of water. Once all these disconnected inputs mix together, it is hard to know what is right and what is wrong.”

The dirty pool is what you get when you skip the work Flatiron did. Data arrives from credentialing systems, provider directories, claims data, and roster feeds. Each source is authoritative about something. None of them are reconciled against the others. All of them mix together into a substrate that nobody fully trusts and nobody has the authority to clean up. The result isn’t just inaccurate records. It’s a system where downstream decisions about care access, billing, and coverage rest on a foundation with no common reference point across the layers that depend on it. And crucially, the problem is invisible. Dirty pools look like clean pools until someone goes swimming.

The CMS audit made it visible. What it revealed wasn’t a technology failure. It was a structural one: no one owned the validation layer. No one had the mandate, the resources, or the operational model to establish and maintain a shared reference layer that credentialing, directories, claims, and care delivery could all orient against.

Here’s the thing about ground truth in healthcare data: it is rarely absolute. It’s relative to the layer consuming it. A provider record can be accurate for credentialing and wrong for network directories at the same time. That means the real work isn’t finding the one correct answer. It’s establishing common ground across layers so each one can trust what it’s building on.

Without common ground, each layer drifts independently: authoritative within its own context, irreconcilable across contexts, until more than half the entries carry at least one error, depending on which layer you ask. That’s what a missing validation layer looks like. The validation layer itself isn’t a dashboard. It’s not a model. It’s the operational commitment to establish what’s true before you build anything on top of it.
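To make “accurate for credentialing, wrong for directories” concrete, here is a minimal sketch in Python. It assumes a single shared reference record per provider and that each consuming layer depends on only a subset of fields; the layer names, field names, and values (including the NPI and phone number) are hypothetical illustrations, not any health plan’s or vendor’s actual schema. Each layer’s feed is checked only against the fields that layer consumes, so the same provider can come back clean for one layer and wrong for another.

```python
from typing import Any, Dict, Iterable, Tuple

# Hypothetical: the fields each consuming layer actually depends on.
LAYER_FIELDS: Dict[str, Tuple[str, ...]] = {
    "credentialing": ("npi", "license_state", "specialty"),
    "directory": ("npi", "address", "phone", "accepting_new_patients"),
    "claims": ("npi", "tax_id", "specialty"),
}


def discrepancies(reference: Dict[str, Any], source: Dict[str, Any],
                  fields: Iterable[str]) -> Dict[str, Tuple[Any, Any]]:
    """Return {field: (reference_value, source_value)} for each field that disagrees."""
    return {
        f: (reference.get(f), source.get(f))
        for f in fields
        if source.get(f) != reference.get(f)
    }


def reconcile(reference: Dict[str, Any],
              feeds: Dict[str, Dict[str, Any]]) -> Dict[str, Dict[str, Tuple[Any, Any]]]:
    """Check each layer's feed against the shared reference, on that layer's fields only.

    A missing feed is treated as an empty record, so every field it should carry is flagged.
    """
    return {
        layer: discrepancies(reference, feeds.get(layer, {}), fields)
        for layer, fields in LAYER_FIELDS.items()
    }


# Illustrative data only: one provider, three source feeds.
reference = {
    "npi": "1234567890", "license_state": "CA", "specialty": "oncology",
    "address": "200 Main St", "phone": "555-0100",
    "accepting_new_patients": True, "tax_id": "12-3456789",
}
feeds = {
    "credentialing": {"npi": "1234567890", "license_state": "CA", "specialty": "oncology"},
    "directory": {"npi": "1234567890", "address": "14 Old Rd", "phone": "555-0100",
                  "accepting_new_patients": True},
    "claims": {"npi": "1234567890", "tax_id": "12-3456789", "specialty": "oncology"},
}

report = reconcile(reference, feeds)
print(report)
# {'credentialing': {}, 'directory': {'address': ('200 Main St', '14 Old Rd')}, 'claims': {}}
```

The design choice worth noting is the `LAYER_FIELDS` map: correctness is scored per consuming layer rather than per record, which mirrors the point that ground truth is relative to the layer using it. The shared reference record is exactly the piece that nobody owns in a dirty pool.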

The Asymmetry That Matters

These two stories are often told separately. Flatiron as a success story about oncology innovation. Provider directories as a compliance problem for health plans. But they’re describing the same underlying dynamic from opposite directions.

Flatiron chose to own the validation layer. It was expensive. It was operationally intensive. It didn’t look like a technology company’s core competency. It was, by any standard measure of startup resource allocation, the wrong place to put money. And it became the entire thesis of a $1.9 billion acquisition.

The provider directory problem is what happens when no one makes that choice. Not because the people involved were careless. Health plans have been trying to fix this for years. The validation layer is structurally nobody’s job. It’s a coordination problem masquerading as a data problem.

This asymmetry shows up reliably across healthcare. The organizations that treat data validation as a strategic investment rather than a compliance burden are the ones that accumulate defensible positions. The ones that treat it as overhead are the ones whose AI initiatives stall at the reasoning and delivery layer, because the model is only as trustworthy as what’s underneath it.

In the previous essay, I argued that platform products need a clean separation between the platform substrate, the reasoning and delivery layer, and the action surface (SRA). Collapsing those layers creates architectural debt that forecloses the agentic future. The healthcare vertical makes the SRA argument with unusual clarity, because the stakes are so legible. A wrong address in a provider directory isn’t abstract architectural debt. It’s a patient who can’t get to a covered specialist. A cancer treatment decision made on unvalidated staging data isn’t a model accuracy problem. It’s a person who may receive the wrong treatment because the system had no way to know what it didn’t know. It’s a problem I’ve been thinking about since before my industry career.

The stakes in other industries are different but the dynamic is the same. Garbage in doesn’t just produce garbage out. It produces confident garbage out, presented in a clean interface, by a system that has no way to know what it doesn’t know.

What “Earning the Right” Looks Like

Returning to the previous essay's frame: the platform earns the right to build for emergence at the reasoning and delivery layer by doing the unglamorous work of correctness at the platform substrate first.

Flatiron earned it. They hired data janitors. They built curation workflows. They worked with regulators to define what “regulatory-grade” actually meant in practice. They spent years doing work that didn’t look impressive at a product demo and couldn’t be shipped as a feature. And when they showed up to the reasoning and delivery layer, when they built the analytics and evidence-generation capabilities on top of that foundation, they had something no one else had: a dataset that the people making multibillion-dollar decisions could trust. That’s what the Roche acquisition was buying. Not software. Trust in a substrate.

The organizations that will have defensible AI positions in healthcare over the next decade are the ones making the same bet Flatiron made: treating the validation layer as a strategic asset rather than a cost center, building the operational infrastructure to keep it accurate, and resisting the temptation to skip to the reasoning and delivery layer before the foundation is ready.

The ones that skip it will have dashboards. Flatiron had a moat.

The Existential Business Question

If you’re building in healthcare, or in any vertical where data quality determines whether downstream decisions are trustworthy, the diagnostic question isn’t whether your model is good. It’s whether your data substrate has earned the right to support it.

A few ways that question tends to resolve:

- You own the validation layer. You’ve invested in the operational infrastructure to keep data current, consistent, and auditable. You know where your data came from, what transformations it’s been through, and what its failure modes are. When something goes wrong, you can trace it. This is expensive. It’s also the only position from which you can confidently build toward autonomous action.

- Someone else owns it for you. You’re buying into a validated substrate: a Datavant linkage, an Arcadia data platform, a Flatiron real-world evidence network. You’re not building the moat, but you’re building on top of a real one. The risk is dependency; the benefit is speed to a trustworthy foundation.

- Nobody owns it. You have data. You have sources. You have something that looks like a substrate. But the validation layer is diffuse, spread across systems, reconciled by nobody, cleaned up when someone notices a problem. This is the dirty pool. It’s the provider directory problem. It’s the most common state. And it’s the one where the AI use cases you’re excited about will keep stopping just short of trustworthy.
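The first position above, knowing where data came from and what transformations it has been through, can be sketched as a value that carries its own lineage. This is a minimal illustration in Python, assuming a hypothetical `Traced` wrapper and a made-up source name (`clinical_note_abstraction`); a real system would more likely persist lineage in an audit log or data catalog than inside each value.

```python
from dataclasses import dataclass
from typing import Any, Callable, Tuple


@dataclass(frozen=True)
class Traced:
    """A value plus the ordered steps that produced it (hypothetical design)."""
    value: Any
    lineage: Tuple[str, ...] = ()

    @classmethod
    def ingest(cls, value: Any, source: str) -> "Traced":
        # Record where the raw value came from.
        return cls(value, (f"ingested from {source}: {value!r}",))

    def apply(self, fn: Callable[[Any], Any], step: str) -> "Traced":
        # Apply a transformation; return a new value with that step appended to the lineage.
        new_value = fn(self.value)
        return Traced(new_value, self.lineage + (f"{step}: {self.value!r} -> {new_value!r}",))

    def trace(self) -> str:
        # Human-readable chain of custody, oldest step first.
        return "\n".join(f"{i}. {s}" for i, s in enumerate(self.lineage, start=1))


# Illustrative: a staging value abstracted from a clinical note, then normalized.
stage = (
    Traced.ingest("Stage IIIa ", source="clinical_note_abstraction")
    .apply(str.strip, "strip whitespace")
    .apply(lambda s: s.replace("Stage ", "").upper(), "normalize stage label")
)
print(stage.value)  # IIIA
print(stage.trace())
# 1. ingested from clinical_note_abstraction: 'Stage IIIa '
# 2. strip whitespace: 'Stage IIIa ' -> 'Stage IIIa'
# 3. normalize stage label: 'Stage IIIa' -> 'IIIA'
```

Because every step appends to an immutable lineage instead of overwriting it, a suspicious staging value can be walked back to the exact source or transformation that produced it, which is what “when something goes wrong, you can trace it” requires.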

The good news is that the dirty pool is fixable. The harder news is that fixing it doesn’t look like a product launch. It looks like what Flatiron did: years of unglamorous operational investment, an army of people doing work that’s invisible when it’s going well, and a relentless commitment to correctness before cleverness. That’s what the moat is made of. And for the first time, the timeline to build it is starting to compress.

Timelines Are Compressing

The calculus for organizations starting this work now is different from what it was for Flatiron in 2012. Not because the work is less real, but because the tools available to do it have changed. The unglamorous work is getting faster.

Atropos Health, a Stanford spinout founded in 2020, built its platform, Geneva OS, on top of a federated network of de-identified real-world patient records. The substrate work came first: curating, harmonizing, and governing hundreds of millions of records from EHRs, claims data, and patient registries into something trustworthy enough to run publication-grade observational studies against. That foundation took years. But once it existed, what they could build on top of it changed dramatically.

In October 2025, Atropos deployed an agentic AI tool, its Evidence Agent, at Stanford Health Care. The agent reads a patient’s chart, identifies the clinical question implicit in the encounter, runs a real-world evidence study against the data network, and surfaces a statistically grounded answer without the physician asking. Studies that traditionally take weeks or months are generated in roughly 20 seconds. The agent is doing what Flatiron’s data abstractors did, but at a different speed and scale, against a substrate that was already governed and validated.

This is the sequence that matters: validated foundation first, agentic capability second. The agents don’t make the dirty pool clean. They make a clean pool dramatically more productive.

There’s a second dimension shifting alongside speed: auditability. One of the persistent objections to relying on AI in high-stakes healthcare decisions is that you can’t see what the model is actually routing through. You can’t tell whether it’s drawing on your validated context or drifting toward unrelated heuristics baked in during training. That’s starting to change at the architecture level, as researchers experiment with routers, context carriers, and data chains that span every level of the system. It’s early, but the direction is significant. Governance of AI outputs may soon be possible at the concept level, not just the prompt level. For healthcare platforms building on validated substrates, that’s the architecture that makes the chain of custody complete.

For organizations weighing whether the validation investment is worth it, the floor on what’s possible once you have a trustworthy substrate keeps rising. Agentic tools are accelerating the return on foundational data work. Interpretability research is making that work auditable in ways that weren’t conceivable in 2012. The operational investment is still real. The timeline to value is compressing. Get moving.

References

Califf, Robert M. [@califf001]. “People should pay attention to the strategy, relentless curation of data, an army of ‘data janitors’ transforming EHR data into analyzable, actionable information.” Twitter/X, February 16, 2018.

Centers for Medicare & Medicaid Services. Medicare Advantage Provider Directory Audit Findings. U.S. Department of Health and Human Services, 2024.

Atropos Health. “Atropos Health launches agentic AI for clinical evidence at Stanford Health Care.” October 28, 2025.

Fierce Healthcare. “Roche considering sale of cancer data startup Flatiron Health: report.” August 8, 2024.

Digital Health Insights. “Health plans turn to new data platform as errors and compliance risks pile up.” December 12, 2025.

Roche. “Roche to Acquire Flatiron Health to Accelerate Industry-Wide Development and Delivery of Breakthrough Medicines for Patients with Cancer.” Press release, February 2018.

Roche. “Roche completes acquisition of Flatiron Health.” Press release, April 2018.