The Chain of Trust

This essay is part of Deep Familiar Ground — a series on distributed nodes, earned trust, and the quiet work of building systems that don't fall apart when you're not watching. The companion piece is The Dirty Pool.

There’s a conversation that surfaces in teams building platform software with enough regularity to suggest it’s not a team problem. It’s a structural one. Splunk had this conversation in the mid-2010s. They had built one of the most powerful data ingestion and indexing engines in enterprise software: machine data at scale, fully searchable, deeply integrated. And their users still couldn’t reliably get from data to decision. The platform worked. The value didn’t land. What they had was a world-class model layer and an insight layer that spoke the language of engineers rather than the people who needed to act. It took years of product evolution – dashboards, alerting workflows, eventually Security Information and Event use cases designed for security analysts rather than log wranglers – to close that gap. It’s the missing controller.

Not the controller in the narrow MVC sense, though we’ll use that pattern as a lens. What’s missing is the layer of connective tissue between what the system knows and what the user needs to do. Every platform product begets a consumption layer: create/consume, build/use, enable/act. The failure to design that last layer as a first-class architectural concern is where enterprise platform products quietly break down, in ways that tend to show up late, diffusely, and often at the worst possible moment.

MVC Problem, Generalized

Model-View-Controller is a design pattern that’s been around since the 1970s. At its core it encodes a single discipline: don’t collapse your concerns. The model holds the data and business logic. The view renders that data for a human. The controller handles input and tells the model what to change. Each layer has a distinct contract. The model doesn’t know how it’s displayed. The view doesn’t know how data is stored. The controller doesn’t embed business logic.

What makes this elegant isn’t just clean code. It’s that the separation creates observability. You can ask, at any point in the chain: why did this output? Where did this state come from? What would I need to change to get a different result? The answer is locatable. There’s a chain of custody.

When that separation collapses, you lose the ability to ask those questions. Logic bleeds into the view. The model starts encoding display assumptions. The controller becomes a sprawl of conditional branches that reflect undocumented product decisions. The system still functions – for a while – but you’ve traded legibility for velocity, and at enterprise scale, that trade comes due.

The same principle applies to any platform product with a substrate layer (data, models, validated structured information) and a delivery layer (insights, recommendations, outputs a human can act on). Designing the delivery layer as a direct expression of the substrate produces the same failure modes. You lose observability (nobody can explain why the dashboard showed that number). You lose audit-ability (nobody can confirm the data was ever correct). You lose iteration velocity (fixing one thing means touching everything).

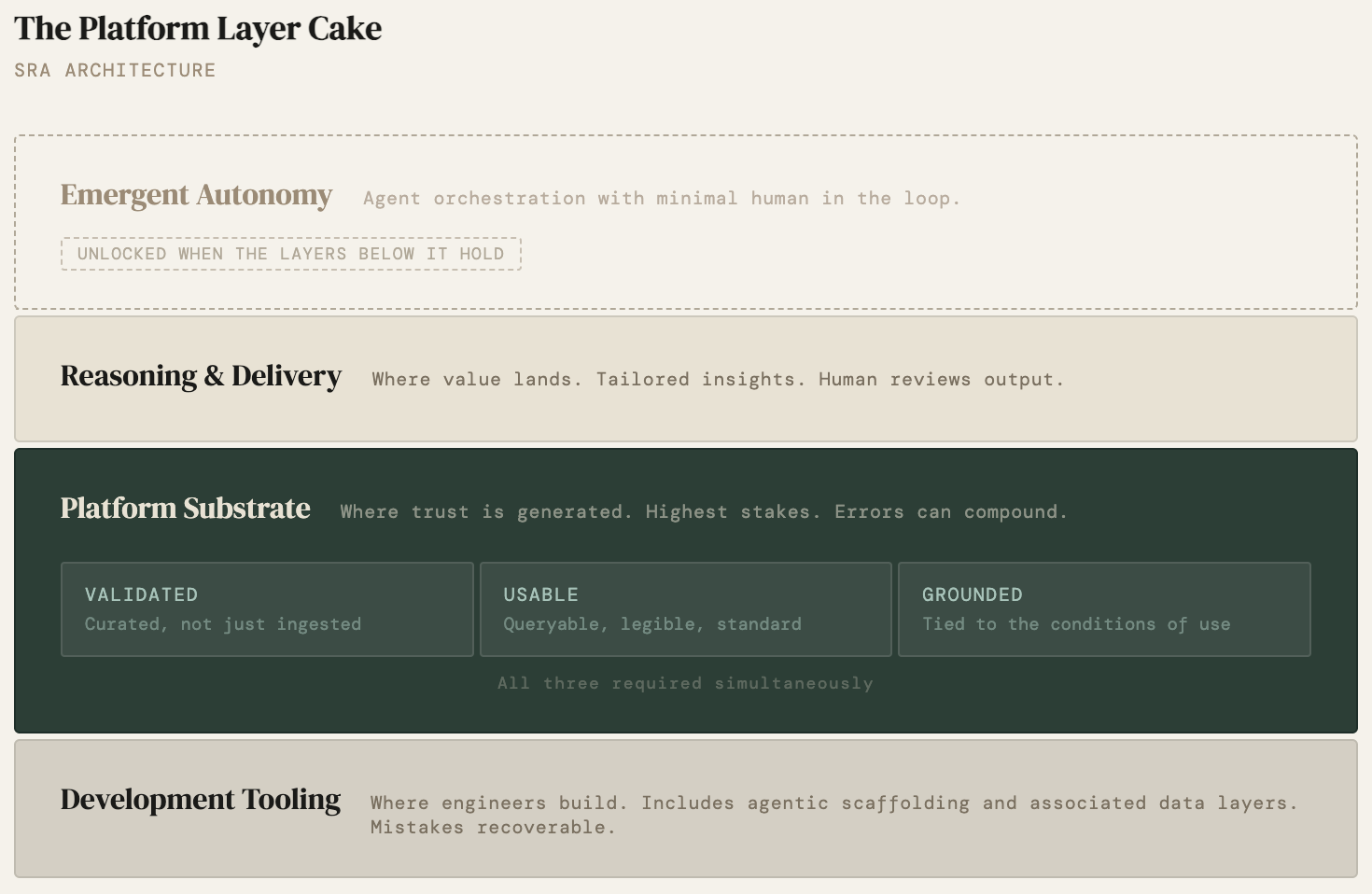

Instead, we introduce proper separation of concerns by defining specific domains. I call this the SRA pattern:

Substrate, Reasoning & Delivery, Action

The Substrate is the authoritative, validated state including data models, provenance, lineage, ground truth. The Reasoning & Delivery layer is interpretation and synthesis over that substrate, translated into outputs a human can act on. They come as insights, explanations, recommendations, and drafts. The Action layer is the controllable surface where humans and agents can safely change state. You can run workflows, add guardrails, review, approve, and publish. Each layer has a distinct contract. Each has different correctness requirements. In AI-native platforms especially, the Action layer is not just a workflow UI. It is an inline control surface that enforces a chain of custody between substrate and outcomes: traceable inputs, attributable reasoning, steerable interventions.

I watched this problem get solved by design early in my career, before it had a name. At Narrative Science, the architecture was deliberately split: one system decided what to say, another decided how to say it. That seam was intentional. Every output was explainable because the layers were never allowed to collapse. (More on that architecture here.)

Guide Labs’ work on concept-interpretable models at 8B parameters illustrates why that chain matters: their architecture forces predictions through explicit concept modules, making it possible to trace which concepts fired and whether the model routed through validated context. Audit-ability doesn't have to be a reporting feature bolted on after the fact in this approach. Even in the lowest layers, provenance is baked into the architecture. It's still early, but this speaks to the need for systems that can reliably emit their origins.

Mapping the Domain Before You Build for Emergence

This is where the conversation about AI in platform products tends to go sideways. AI as a category in enterprise product development conflates at least three distinct problem spaces, each with different architectural requirements, different correctness criteria, and different failure modes. Treating them as one category produces muddy decisions, missed timelines, and errors that compound from a foundation that was never properly validated.

The three buckets:

- Development tooling (the build layer) covers the use of AI to accelerate the engineering work of building the platform itself. GitHub Copilot, Cursor, Claude Code: development-time tools that operate outside the product’s data model. Their outputs are reviewed and merged by engineers. The quality bar is code review and testing. This bucket has the most latitude for experimentation because mistakes are caught before they reach production and the feedback loop is tight. One of the most common sources of organizational confusion is applying the loose correctness standards appropriate here to the layers where they don’t belong.

- Platform Substrate covers the use of AI to build, validate, or reason about the data model itself. This is the highest-stakes bucket and the most constrained. The substrate is the foundation everything else sits on. A mistake here doesn’t stay local – it compounds to every consumer of the model. This is where the choice of which kind of AI matters enormously. A deterministic SQL query may be the right tool for constructing a core data record. Not because AI couldn’t generate one, but because determinism is a correctness requirement when you’re building a block everything downstream depends on. An orchestrator might be appropriate for assembling and tuning a data payload, but only after you’ve defined what correctness looks like for that payload. Garbage in at this layer doesn’t stay in this layer. It travels.

- Reasoning & Delivery covers the use of AI to analyze, interpret, draft, and deliver outputs to end users. This is where emergence becomes relevant, where you can afford more flexibility and composability, where an agent drafting a summary, generating a chart, or assembling a recommendation is operating on validated data and producing outputs a human reviews. The tolerance for imprecision is higher, the creative surface is broader, and the constraint is clear: you can automate and orchestrate nearly anything in this layer, as long as the substrate underneath is correct. Eventually, whether human or agent, this is where the end user is closest to value delivery.

Action shows up as the seam between understanding and execution. Trust shows up as the act of doing (making a purchase, diagnosing a patient, investing in a fund). You exchange time, money, or attention in exchange for something you believe is of value. Returning to this part of the system indicates a value feedback loop. An aligned system.

You can build for all three simultaneously – in a competitive market you probably have to. But building for all three without being explicit about the principles governing each is how you get stuck. Decisions made at the platform substrate bleed into the reasoning and delivery layer. Assumptions about correctness appropriate for action get imported into the substrate. The layers collapse, and with them the ability to reason about what went wrong and fix it.

The Last Mile Is Where Value Lives

Salesforce understood this early and built a business model around it. The CRM data layer – accounts, contacts, opportunities, activities – is the model. The Einstein analytics layer, the flow builders, the dashboard views – these are the controller and view surfaces. When Salesforce acquired Tableau in 2021, they weren’t buying a data company. They were buying an insight delivery layer: a way for users to translate structured operational data into reasoning they could act on. The acquisition was an acknowledgment that the model layer, no matter how mature, cannot deliver the last mile by itself.

The cautionary arc of legacy BI platforms in the late 2010s lands the same point from the other direction. Many had extraordinarily robust data layers – validated, governed, well- architected. But the delivery surfaces were designed by engineers for engineers. The reasoning and delivery layer reflected the structure of the data, not the reasoning patterns of the people who needed to act on it. Adoption stalled. When that layer doesn’t exist in a form users can work with, they build their own outside your system – and value capture goes with them. The model was correct, but product wasn’t.

The research on what this costs is unambiguous. ISACA’s 2022 State of Digital Trust survey found that organizations with low digital trust report less reliable data for decision-making (53%) and direct negative impact on revenue (43%). Trust is not a UX concern layered on top of architecture. It is downstream of architecture. The question "do I trust this system?" traces back directly to whether the chain – platform substrate to reasoning and delivery to action – is legible and verifiable at each step.

The delivery layer is only as defensible as the foundation underneath it. An operator can automate, orchestrate, draft, and deliver confidently if and only if the substrate is verified. The most sophisticated action surface in the world, running on a dirty or unvalidated model, doesn’t produce better insights faster. It produces wrong answers at scale, with confidence.

The platform earns the right to build for emergence by doing the unglamorous work of correctness first.

Opinionated Flexibility

Proper separation of concerns creates something valuable and dangerous in equal measure: flexibility. When the platform substrate, reasoning and delivery, and action layers each have clean interfaces, different interaction patterns become possible at each layer. You can expose the substrate to integrations – API, SDK, MCP, choose your architecture – without touching the reasoning and delivery layer. You can redesign the action surface without destabilizing the substrate. You can support both UI and agentic interactions against the same foundation because the platform’s contracts are stable enough to be depended on.

This flexibility is the precondition for what Kasey Klimes calls design for emergence – the paradigm shift from designing fixed solutions for known users to designing composable systems that users can adapt to unanticipated needs. In Klimes’s framing, user-centered design retains the assumption that the designer controls the outcome; design for emergence gives users control while the designer controls the combinatorial grammar: the alphabet and the rules for joining, not the sentences. The results may genuinely surprise the designers. That’s the signal that emergence is working.

But Klimes wrote that piece in 2022, before the agentic layer entered the picture seriously. Design for emergence in 2026 looks different because the user who composes and adapts the system is no longer always human. An agent navigating a platform’s API is, in a meaningful sense, a long-tail user with highly specific, contextual needs the platform’s designers (likely) never anticipated.

The same design principles apply: low floor (the agent can do basic things without complex configuration), wide walls (many possible interaction paths), high ceilings (sophisticated workflows are possible for capable orchestrators). The correctness requirements are asymmetric across the three layers and is worth noting. Emergence is appropriate and generative in the reasoning and delivery layer. In the platform substrate, where the data model is being constructed, emergence without guardrails produces exactly what we’ve been trying to avoid: confident wrongness at the foundation. Note that research to understand indeterminate agent behaviors is still on-going. Even with this approach, we will need to discover new guardrails (see: Agent Drift: Quantifying Behavioral Degradation in Multi-Agent LLM Systems Over Extended Interactions)

The SaaS examples that have made composability work at scale illustrate where emergence belongs. Notion’s content block architecture enabled a $10 billion company to serve a radically heterogeneous user base, each adapting the system to contexts Notion’s team never anticipated. Airtable built a relational data model with configurable views that non- technical users can adapt without engineering support. What both share is a stable foundation – the content block contract, the relational schema contract – on top of which emergence is permitted and encouraged, but also an opinion that makes them sticky. For Notion its about documents as databases, for Airtable its about tables as interfaces. Flexibility without that opinion is not a product. It’s a development environment. The emergence happens above the foundation, not within it, and it’s oriented toward an outcome.

Three Questions as a Diagnostic

The three questions a user asks of any platform product are deceptively simple diagnostics for whether the architecture is sound.

- Do I trust this system? That’s a substrate question. Is the foundation clean, consistent, and verifiable? Can you trace an output back to its source and understand why it’s true? (What makes a data substrate actually trustworthy – and what happens in healthcare when nobody does that work – is the subject of the companion piece to this essay.)

- Am I getting what I need? That’s a reasoning question. Is the delivery layer designed for the user’s reasoning, or for the system’s internal logic? Does it translate validated data into the kind of understanding needed to act?

- Can I course-correct? That’s an action question. Is the action surface expressive enough to steer toward what’s needed? Can you provide feedback, modify your path, or redirect the system when its outputs don’t serve you?

When all three layers collapse into one, users can’t distinguish between the data is wrong, the insight is misframed, and I don’t know how to steer. They experience diffuse mistrust – which is among the most expensive failure modes in enterprise software because you can’t debug it. You can only rebuild credibility slowly, by reestablishing each layer of the chain.

This is the ouroboros that keeps appearing in platform product strategy. The problem of value delivery keeps consuming itself because organizations solve the action problem by piling more onto the substrate, or solve the substrate problem by building workarounds in the reasoning layer. The boundary between them is the thing worth protecting. Not as an architectural formalism, but as the precondition for trust, for value, for the kind of autonomy that makes agentic systems worth building in the first place.

The controller is not just a design layer. It is the surface where human agency meets system intelligence. Design it well, and you’ve built something users can rely on, adapt to their needs, and eventually – carefully, with eyes open – begin to share with agents that can act on their behalf. Design it carelessly, and you’ve built a system that works fine until it doesn’t, in ways nobody can explain, at the worst possible time. It’s a design choice made every sprint.

References