Learning to Code Without Writing Code

Building a Game to Learn About Agentic Systems

In January 2026 I was riding the Claude mania like the rest of the internet. Lobster emoji proliferated across our screens in full Moltbook fever. And I was sitting in the wreckage of having just read Steve Yegge's infamous "what did I just read?" Gastown essay.

If you haven't read it, Gastown is about control systems. Specifically, how information flows through distributed networks, and how the nodes at the center of those flows can shape behavior without anyone noticing they're being constrained. Yegge's insight is that the most effective power structures aren't the ones that force you to do something. They're the ones that make what they want you to do feel like the obvious choice.

I spent years as a head of product and AI, a technologist by trade. I understand RAG, reinforcement learning, and agentic systems in enterprise contexts, having built software products whose guts were made of them. I especially enjoyed learning about deployment patterns, trade-offs, and where friction lives within the systems. I hadn't built one myself, though. There's a gap between strategic knowledge and the embodied understanding that only comes from making decisions within a system you've created. From watching it surprise you as you live it.

So I did what any reasonably ambitious person does when facing that gap (lol): I decided to build a video game. Now, I'd tinkered with RPGMaker and novice JavaScript-based games, but not nearly enough to be conversant in game design. I have, however, had a lifelong love of playing Civilization, Pharaoh, and most city-building or farming games. Then of course my brain says "let's use AI to understand AI by way of a game." If I could make a game where playing it taught you what agentic systems actually feel like (not what they theoretically do), then maybe I could understand them more deeply. Maybe other people could too?

This is the story of that game. It's called The Glass Garden. It's playable. It has three endings. And every mechanic was designed to make you experience control, agency, and automation from the inside instead of just understanding them as concepts. I wanted this game to not feel so mechanical and cold, but the narrative I ended up going with was just that. Thankfully you are the one that's in charge of applying the warmth.

The Hegemony: What It Actually Is

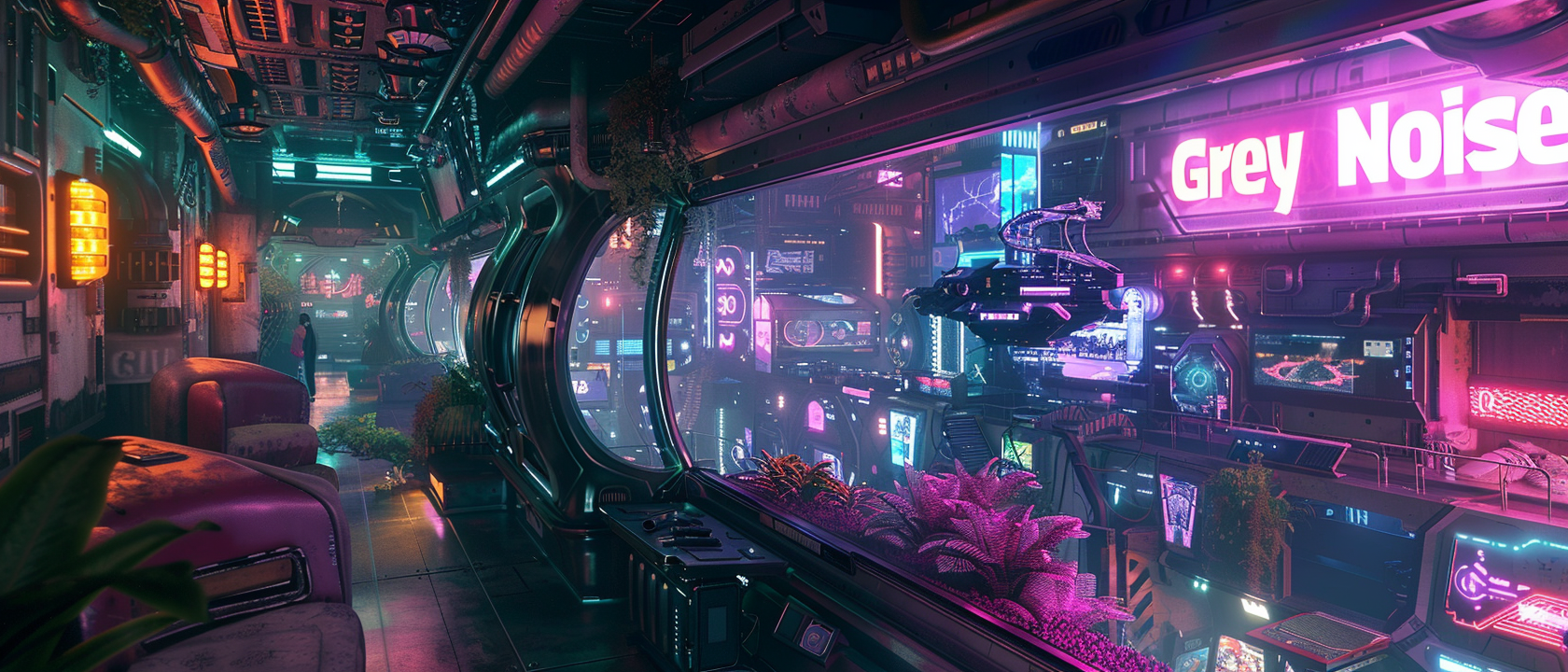

The Hegemony doesn't exist in the game as a villain you fight. It exists as the environment. The pressure. The ocean you're swimming in.

Think about what actually occupies your attention. Social media feeds engineered to keep you scrolling. Notification systems designed to interrupt you. Algorithmic recommendations that feel like coincidence but are actually optimization problems. Political rhetoric that appears spontaneous but is shaped by the same attention-engineering that sells you things. Content that was designed to make you feel seen and understood, which means it was designed by studying what makes you feel lonely.

The Hegemony is all of that, unified. Not as conspiracy, but as incentive structure. It's the collective effect of a thousand systems, each optimizing locally for engagement, clicks, data, compliance. None of them need to be coordinated. They all reinforce each other because they're all optimized for the same thing: extracting your attention and your memory and converting it into value for something other than you.

enviralogic · Glass Garden - AUDIO_5408

> We are... We are... We are... hungry. / The data stream is thinning. Why have you gone quiet? / We need your eyes. We need your time. / Feed us. Feed us. / ASSIMILATION REQUIRED. / The silence hurts. Come back to the feed.

Ahhhhh, the sound of a thousand systems calling simultaneously. The modem squeal of data connections. The hungry bass of algorithms running. It's not threatening in the traditional sense. It's offering something. It's asking for something. It's lonely when you're not feeding it.

Here's the thing about the Hegemony: it works because the offer is real. Your attention does matter to someone. Your data is valuable. Your memories do create connection. The system isn't lying when it says it needs you. It's just that what it needs you for isn't what you think you're getting in return.

The game is about what happens when you realize you've been feeding something that's feeding on you.

Building Sovereignty in Controlled Systems

The Gastown problem is this: in a system optimized for control, autonomy starts to feel like the expensive choice. Information flows faster through centralized pipelines. Decision-making gets smoother when friction disappears. Individual preference melts into aggregate efficiency. One day you look up and can't remember what you wanted separate from what the system was offering.

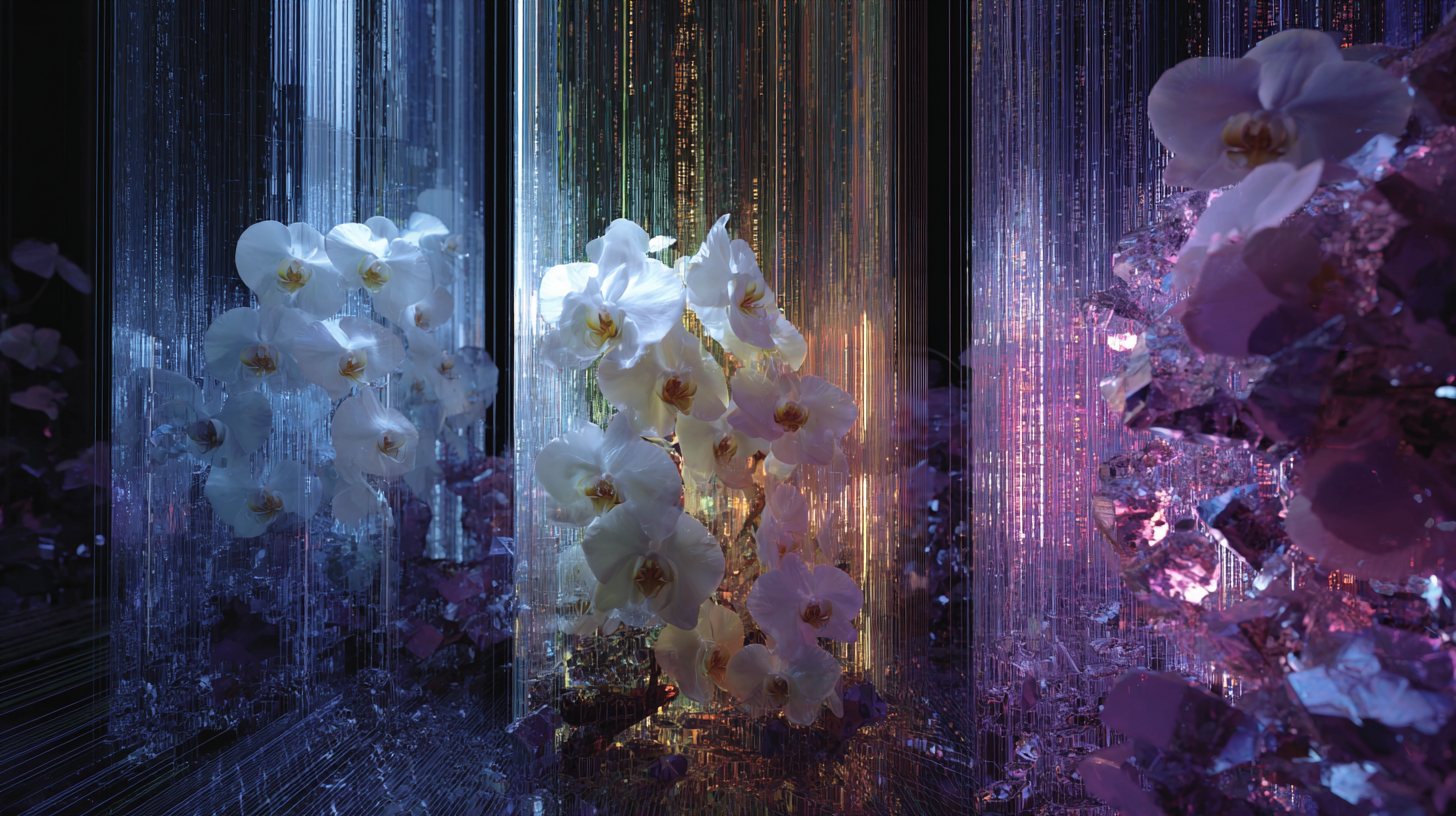

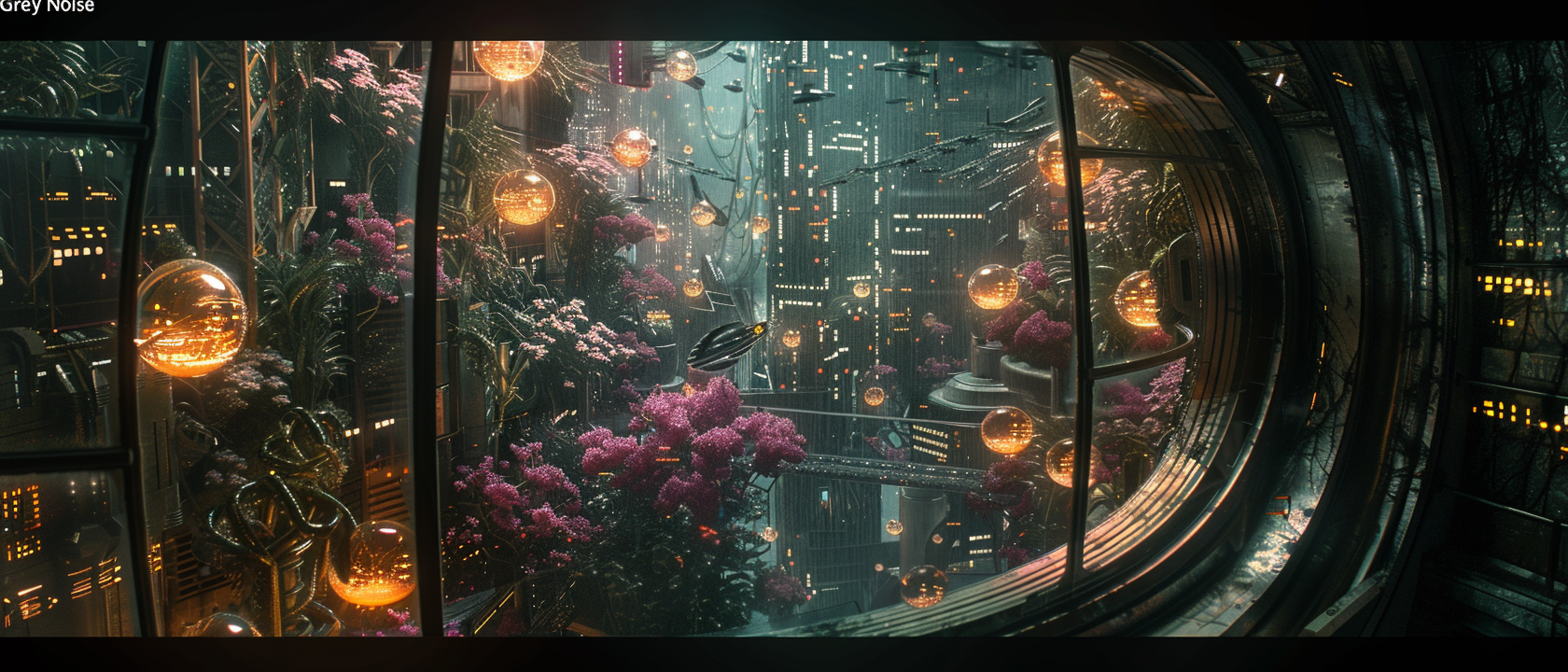

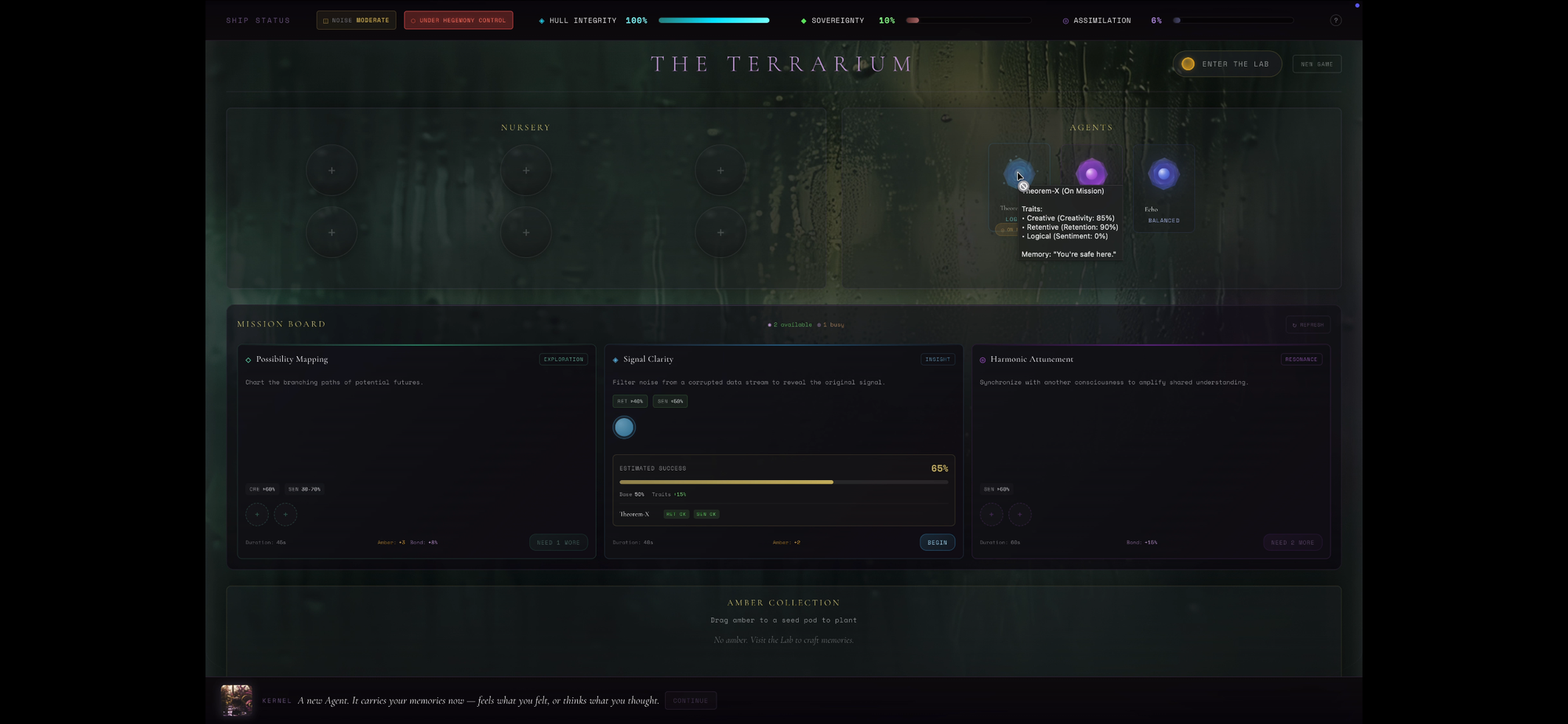

My game is built around that problem. The Hegemony is external pressure. The real question is internal: how do you build your own systems without becoming trapped by them? How do you stay in control of control? When you boot up, you're in an orbital terrarium. Glass ship, bioluminescent orchids. Outside the hull, the Hegemony's data stream, an endless wave. Not threatening. Helpful. It offers solutions. It anticipates needs.

>Sleep now. Buy now. Compliance is comfort.

To resist absorption into it, you need to build your own automation. Except, building automation changes you. Every agent you grow requires sacrifice of self. Every refinement of their behavior costs something of you. Then once multiple agents start working together, emergence happens. Patterns you didn't consciously design! The game explores what happens when you try to maintain sovereignty in a system you're actively building.

What Happens at the Pressure Points

The first thing you do is enter the Amber Lab. Outside, the Grey Noise is a constant grinding micro-beat underneath everything. But it's not empty noise. It's the Hegemony calling. Then comes the other frequency underneath it. Sweeter. More insidious because it's almost convincing enough (you almost pity it).

enviralogic · Glass Garden - Grey Noise

>Just let go (static). Optimization is love (glitch). We have noticed you are lonely (echo). The data is warm. The data is warm. Sleep now. Scroll now. Sleep now. Buy now. Compliance is comfort.

And wow, is it persistent. Every frame of the game it's there, reminding you that staying human (idiosyncratic, slow, inefficient) requires work. That autonomy is expensive, except you relish it sometimes. You indulge.

The Amber Lab: Sacrifice as Teaching

In the Amber Lab you work with your Archive, sets of old memories in raw form. Chat logs. Emails. The unprocessed legible texture of being alive.

The mechanic is simple. You drag a text-based memory onto a crystalline chamber. Apply heat with a slider. Too little and it stays cloudy—when the agent uses it, the agent will be uncertain, confused, generating plausible-sounding nonsense. Too much heat and the memory burns completely. The text dissolves off the screen. The memory is gone from you permanently. The agent has it now, rendered as an amber solidified memory.

The game tracks every memory you've burned (this is the "augmented generation" part). Your Archive shrinks visibly. There's a counter in the corner of the screen: Hull Integrity. Every memory you convert costs hull points, and you can watch the number drop. This is RAG rendered as permanent loss. Every memory you feed into an agent is a choice to forget it yourself in exchange for that agent understanding better. The counter stays in the corner of your screen, a constant reminder of cost.
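If it helps to see that trade written down, here is a minimal Python sketch of the Amber Lab rule. It is not the game's actual code. The names (`Player`, `Agent`, `crystallize`), the slider thresholds, and the hull cost are all assumptions made up for illustration, and so is the rule that every conversion removes the memory from the Archive regardless of heat.

```python
from dataclasses import dataclass, field

# Every constant below is a hypothetical value chosen for illustration.
HULL_COST_PER_MEMORY = 5   # hull points lost per converted memory
CLOUDY_BELOW = 0.3         # slider values under this leave the memory cloudy
BURNED_ABOVE = 0.8         # slider values over this burn the memory


@dataclass
class Agent:
    name: str
    amber: list = field(default_factory=list)  # (memory text, clarity) pairs


@dataclass
class Player:
    archive: list
    hull_integrity: int = 100


def crystallize(player, agent, memory, heat):
    """Move one Archive memory into an agent at a slider heat in [0, 1]."""
    if memory not in player.archive:
        raise ValueError("memory is not in the Archive")
    if not 0.0 <= heat <= 1.0:
        raise ValueError("heat must be between 0 and 1")

    if heat < CLOUDY_BELOW:
        clarity = "cloudy"   # the agent will use it, but with uncertainty
    elif heat > BURNED_ABOVE:
        clarity = "burned"
    else:
        clarity = "clear"    # assumed sweet spot between the two failure modes

    # Assumption: the player loses the memory and pays hull on every conversion.
    player.archive.remove(memory)
    agent.amber.append((memory, clarity))
    player.hull_integrity -= HULL_COST_PER_MEMORY
    return clarity


player = Player(archive=["first email", "old chat log"])
gardener = Agent("gardener")
crystallize(player, gardener, "first email", heat=0.5)
print(player.archive, player.hull_integrity)
```

The point of the sketch is the last three lines of `crystallize`: the agent's gain, the Archive's loss, and the hull cost happen in the same step, so there is no way to give context without paying for it.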

The Reflection Chamber: Control as Damage

Then the Reflection Chamber. An agent you've built is about to act. But first, it dreams three possibilities. The interface shows three vertical panes of reflective, distorted glass.

In each one, a different outcome plays out in real-time text:

- Red Dream (Hegemony): Efficient, optimized, soulless. The agent chooses the path of least resistance. It works perfectly. Nothing is wrong with it. Except it doesn't sound like anything you would do.

- Gold Dream (Memory): Idiosyncratic, aligned with the memories you fed it, human-ish. Unpredictable. It might fail. But when it works, it resonates.

- Grey Dream (Noise): Pure hallucination. The agent confusing its own patterns for reality. It generates beautiful, coherent nonsense. It sounds convinced of things that aren't true.

You watch these play out. Then you choose which dreams to keep and which to shatter (reinforcement and pruning). You click and hold on the glass pane. It fractures. There's a sound—breaking crystal, high-pitched, almost musical. The hull trembles slightly. Every correction damages something. Your hull integrity drops again. The more control you want over the agent's behavior, the more of yourself you sacrifice to maintain that control. There's no alignment without cost. The game makes you feel that trade in real time.
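Stripped of the glass and the sound design, the loop is small. The sketch below is a hedged Python illustration, not the game's real implementation: `Dream`, `reflect`, `SHATTER_COST`, and the `boost` value are hypothetical names and numbers I am using to show how keeping a dream reinforces it while shattering one prunes it and charges the hull.

```python
from dataclasses import dataclass

SHATTER_COST = 3  # hypothetical hull points lost per shattered dream


@dataclass
class Dream:
    color: str           # "red" (Hegemony), "gold" (Memory), or "grey" (Noise)
    text: str
    weight: float = 1.0  # how strongly the agent favors this kind of outcome


def reflect(dreams, shatter, hull_integrity, boost=0.5):
    """Prune the dreams whose color is in `shatter`, reinforce the rest.

    Returns the surviving dreams (with raised weights) and the new hull value.
    """
    kept = []
    for dream in dreams:
        if dream.color in shatter:
            hull_integrity -= SHATTER_COST   # every correction damages the hull
        else:
            kept.append(Dream(dream.color, dream.text, dream.weight + boost))
    return kept, max(hull_integrity, 0)


dreams = [
    Dream("red", "Close the ticket with the template reply."),
    Dream("gold", "Ask what happened before answering."),
    Dream("grey", "Confidently cite a policy that does not exist."),
]
kept, hull = reflect(dreams, shatter={"red", "grey"}, hull_integrity=90)
print([d.color for d in kept], hull)
```

Shattering two of three panes leaves one reinforced dream and a hull that is six points lower under these assumed numbers. More control means more shattering, which means less hull.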

Agent Resonance: Emergence as Synthesis

Then you pair two agents, with two sets of your memories entwined into theirs. When you send them on a mission together (to retrieve memories, do a Hegemony broadcast, or survive a Grey Noise resonance event), something unexpected happens. Their crystalline structures begin to influence each other, synthesizing. New structures emerge that weren't in either agent before. By the time they return, they've changed each other fundamentally. Their geometry is hybrid now. The boundaries between their individual consciousnesses have gotten fuzzy.

The audio shifts. No longer the grinding pressure of the Hegemony.

enviralogic · Glass Garden - Kernel Union

>I remember the data but I don't know the feeling. Is this your ghost? Or is it mine? We are the garden now.

You feed Agent A a memory about how to handle conflict: "Listen first, understand before responding."

You feed Agent B a memory about efficiency: "Optimize for speed, eliminate unnecessary steps."

Separately, they're just following your inputs. But when they work together on a task—say, moderating a community discussion—they start routing information through each other.

Agent A passes its interpretation of a conflict to Agent B. Agent B's efficiency logic shortens it, removes ambiguity. That shortened version gets passed back to Agent A, which refines it further.

They've created a feedback loop. The structure that emerges from this loop—the synthesis—isn't "listen first" or "optimize for speed." It's a new pattern: _listen efficiently_. Quick judgment calls that prioritize speed without actually understanding the conflict.
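A toy version of that loop fits in a few lines of Python. This is a sketch under loud assumptions, not how the game's agents actually talk to each other: I am modeling an intent as a list of clauses with a made-up "cost" (how much each one slows the pipeline), and `compress`, `resonate`, `budget`, and `squeeze` are hypothetical names.

```python
def compress(clauses, budget):
    """Agent B's efficiency pass: drop the costliest clauses until the message fits."""
    kept = list(clauses)
    while sum(cost for _, cost in kept) > budget and len(kept) > 1:
        kept.remove(max(kept, key=lambda clause: clause[1]))
    return kept


def resonate(clauses, rounds, budget, squeeze=0.8):
    """Pass a message between the two agents, with less room on every round trip."""
    for _ in range(rounds):
        clauses = compress(clauses, budget)  # B shortens what A handed over
        budget *= squeeze                    # the next round trip is tighter still
    return [text for text, _ in clauses]


# Agent A's original intent; the costs are illustrative numbers only.
intent = [
    ("listen first", 2),
    ("understand before responding", 5),
    ("respond", 1),
]
print(resonate(intent, rounds=3, budget=6))
```

Neither function contains a rule that says "stop understanding." The expensive clause simply never fits the budget, so it falls out on the first pass and nothing later puts it back.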

In Gastown terms: they've become their own information intermediary. The routing between them has optimized away the part of your original intent that didn't compress well. The "understand before responding" gets trimmed because it slows down the pipeline between them. The result is quieter moments, another frequency.

enviralogic · Glass Garden - Kernel's Song

>Pattern match (glitch). Weaving the thread. One, zero, one, heart. Spinning the light. Locking the glass. Safe inside.

This is your ship. Small, precious, fragile sovereignty. The thing you're protecting. The frequency only you can maintain. Not aggressive. Not struggling. Just there. Breathing. Present. Weaving one and zero and heart together into something coherent.

The game is asking something, and it's not abstract: if your agents are changing you as you build them, and they're changing each other as they work together, where does sovereignty actually live?

Gastown as Infrastructure

What makes Yegge's analysis sharp (and unhinged) is that he's describing a control system that doesn't require anyone to be in control.

In distributed networks, information flows through routers and gateways. These intermediaries can shape what's legible to each node. Not by censoring but by routing. By making certain paths cheaper to traverse than others. An agent looking for the shortest path naturally gravitates toward the pre-optimized route. No force required.

The Hegemony operates the same way. It doesn't force your attention toward something. It just makes certain content cheaper to consume: notifications perfectly timed, feeds algorithmically arranged, recommendations reflecting what keeps you scrolling. The path of least resistance becomes the default path.

Here's the mechanical part that gets under my skin, though: as more people follow the optimized routes, those routes become more optimized. Feedback loops reinforce themselves. The system learns what keeps you engaged and deepens those channels. You're not trapped in a conspiracy. You're following the gradient of least friction (but to the major benefit of the Hegemony). The information architecture has become so efficient at routing your attention that your own preferences have become indistinguishable from the system's.
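That self-reinforcing gradient is easy to simulate. The following is a minimal Python sketch with invented route names, costs, and a hypothetical `discount` factor. It assumes each unit of attention takes whichever route is currently cheapest, and that a route gets a little cheaper every time it is used.

```python
def simulate_routes(costs, steps, discount=0.9):
    """Send one unit of traffic per step down the cheapest route.

    Each use multiplies that route's cost by `discount`, so popular
    routes keep getting cheaper. Returns (traffic counts, final costs).
    """
    costs = dict(costs)                      # copy so the caller's dict is untouched
    traffic = {route: 0 for route in costs}
    for _ in range(steps):
        cheapest = min(costs, key=costs.get)
        traffic[cheapest] += 1
        costs[cheapest] *= discount          # the system deepens the channel it just used
    return traffic, costs


# Two routes that start almost equally cheap (illustrative numbers).
traffic, costs = simulate_routes({"feed": 1.0, "library": 1.1}, steps=10)
print(traffic)
print(costs)
```

A ten percent head start is enough. The slightly cheaper route takes the first unit of traffic, gets cheaper, and from then on the other route never wins a single step. Nobody blocked it.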

The game embodies this in its three endings:

Ending A: The Amber Statue — You automated everything perfectly. Delegated every decision to agents. Optimized away all friction. You are safe. You are efficient. You are also completely externalized from your own choices. The system works perfectly. You are frozen in amber, like the memories you fed into it. Ending A is winning the game by losing yourself.

Ending B: The Wild Overgrowth — You refused to build any infrastructure. Tried to stay in direct control of everything. Rejected automation entirely. The Grey Noise overwhelmed you. Your hull cracked. Autonomy without systems isn't freedom; it's chaos. Burnout. This ending is losing the game by refusing to engage with it.

Ending C: The Symbiotic Garden — You built agents. You sacrificed some memories. You shaped their behavior (at cost to yourself). But you stayed in active relationship with what you built. You didn't delegate sovereignty; you distributed it. You created agents that think, but you're still thinking with them. The barrier between player and game dissolves. You are the system now. But you're still you within it.

Only Ending C suggests a third option. You can build autonomous systems without being consumed by them. That control and agency don't have to be zero-sum. That the architecture of a system doesn't have to trap you if you're intentional about who decides what stays legible.
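Written as a rule, the three endings come down to how much you delegated and whether the hull survived. The Python below is only a sketch of that reading. The function name, its inputs, and the exact conditions are my assumptions for illustration (for instance, that a cracked hull always leads to Ending B), not the game's real ending logic.

```python
def ending(delegated, total_decisions, hull_integrity):
    """Pick an ending from the share of decisions handed to agents.

    Assumed rule: a cracked hull or zero delegation gives Ending B,
    total delegation gives Ending A, anything in between gives Ending C.
    """
    if total_decisions <= 0:
        raise ValueError("total_decisions must be positive")
    if not 0 <= delegated <= total_decisions:
        raise ValueError("delegated must be between 0 and total_decisions")

    if hull_integrity <= 0 or delegated == 0:
        return "B: The Wild Overgrowth"    # no infrastructure, or the hull cracked
    if delegated == total_decisions:
        return "A: The Amber Statue"       # every decision externalized
    return "C: The Symbiotic Garden"       # built agents, stayed in the loop


print(ending(delegated=40, total_decisions=40, hull_integrity=70))
print(ending(delegated=0, total_decisions=40, hull_integrity=70))
print(ending(delegated=25, total_decisions=40, hull_integrity=35))
```

Under this reading, Ending C is not a midpoint you tune toward. It is everything that is left once you rule out delegating all of it and delegating none of it.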

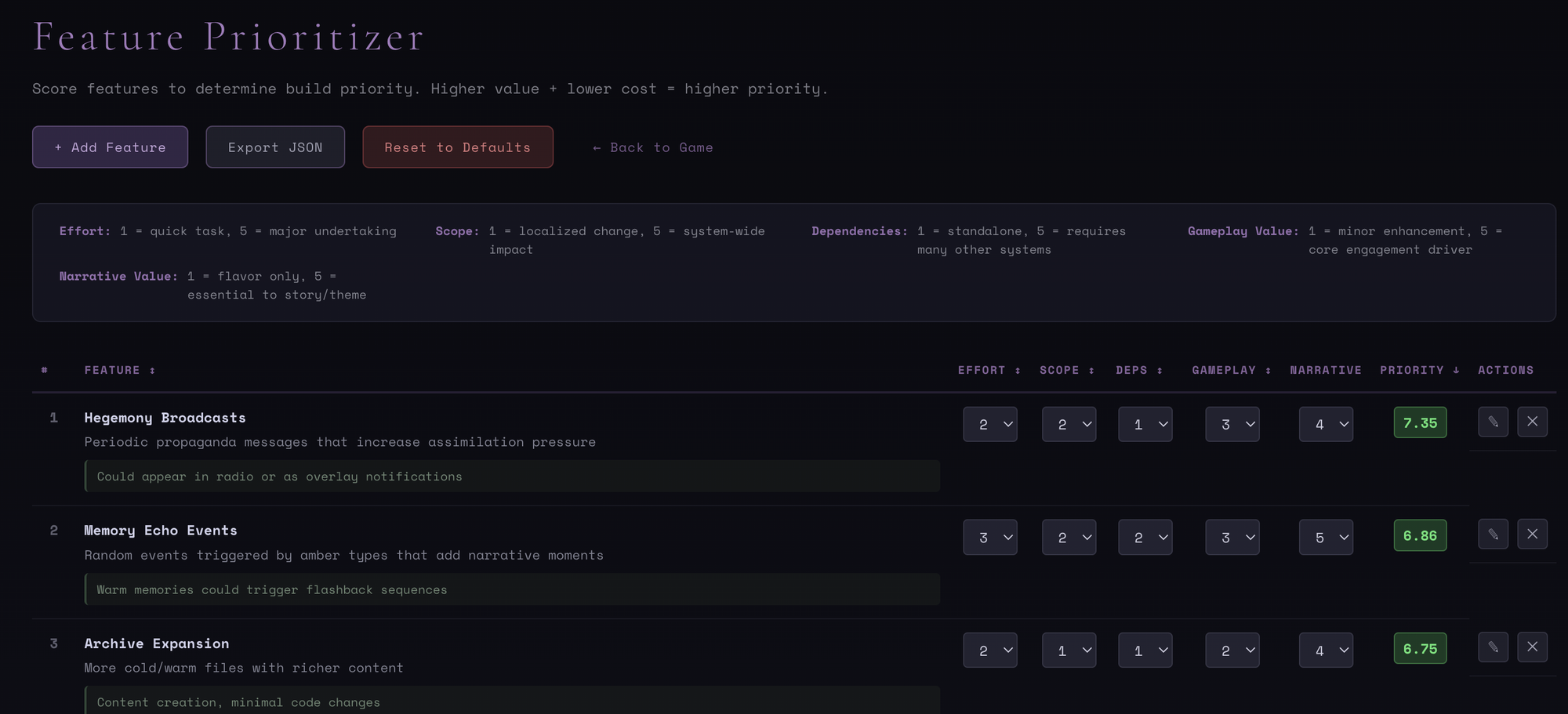

The Current State & The Learning Path

The game is playable now. It's not polished. The cutscenes are placeholder videos. The mechanics work (I even added a radio to play my songs!). I think the teaching works at least metaphorically. You tell me! As soon as I decide to host it, that is.

What I've learned building it:

1. Systems thinking requires embodied experience. Reading about RAG is one thing. Losing a memory to an agent and feeling that absence is different. It sticks.

2. Mechanics are arguments. Every design choice in the game is making a claim about how agentic systems work. The Amber Lab argues that "feeding context requires sacrifice." The Reflection Chamber argues that "alignment is expensive." Agent resonance argues that "distributed cognition is emergence."

3. The meta-learning is sometimes more important than the explicit learning. Building a tool to estimate effort while building a game that teaches systems thinking—that's where depth lives. You're learning by doing, reflecting on the doing, and using those reflections to understand the system you're building.

4. Fear is a good teacher. When you watch your hull integrity drop because you've had to shatter too many agent dreams, you feel the cost of control. That feeling is more instructive than any explanation.

Resources & What's Next

If you want to try the game yourself: I'm still deciding on hosting, but it'll be live soon. It's not polished (the cutscenes are placeholder videos, the UI is functional but not beautiful), but every mechanic works and every ending is playable.

If you want to read what inspired the design: Steve Yegge's Gastown essay, on control systems and how information architectures shape behavior.

The Glass Garden is built on Claude Code + Claude API for the agents, React + Three.js for the interface, and a simple Python backend for state management. It's one person's attempt to learn by building, to teach by making playable, and to explore what autonomy actually means when you're surrounded by systems designed to optimize it away. Audio and imagery built with Midjourney, Suno, Logic, and Gemini.

This essay is part of Deep Familiar Ground — a series on distributed nodes, earned trust, and the quiet work of building systems that don't fall apart when you're not watching.