Buried in Svalbard

Discovery

While assembling the professional works index for this very website, I found an Arctic Code Vault Contributor badge on my magicpia GitHub profile. Earned February 2, 2020. I had no memory of it.

The vault is a decommissioned coal mine inside a mountain in Svalbard, Norway, hundreds of meters into permafrost. GitHub captured 21 terabytes of active public repository data in a snapshot taken February 2, 2020, photographed it onto archival film rated for 500 years, and deposited it there in July 2020, with a stated preservation goal of 1,000 years. The guide they included is addressed to no one in particular: "whoever finds this." An acknowledgment that the intended reader is genuinely unknown, someone centuries out with no context for what a repository is or why it was worth keeping.

My daughter is named after Lyra Belacqua, who travels to Svalbard in His Dark Materials looking for something that was taken and hidden away. That's probably a coincidence. Probably.

Background

Contributors whose code was captured in the February 2, 2020 snapshot received the Arctic Code Vault Contributor badge, a one-time achievement tied to that specific deposit. The deposit was not opt-in. GitHub photographed active public repositories and attached the badge retroactively. The badge has since been retired; no one else will earn it.

Archived repositories from magicpia include magic-network/magic-protocol, magic-network/magic-cli, and magic-network/magic-agent.

What the Magic Repositories Were

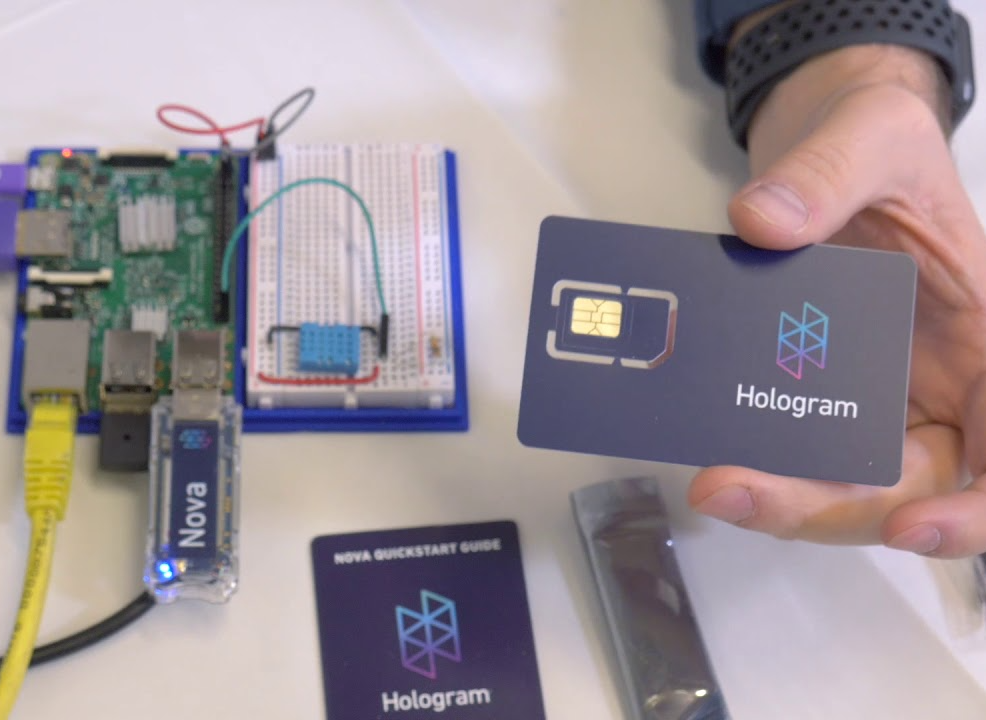

Magic was an internal R&D project incubated at Hologram Inc. during 2018-2019. Hologram's core business is cellular IoT connectivity: SIM cards, device management, data pipelines for connected hardware. The Magic effort asked whether that technical foundation could support something more ambitious: a next-generation internet service protocol built on decentralized infrastructure.

The concept was a distributed mesh of internet gateways, operated by both individual contributors and established carriers, routing traffic without the chokepoints of traditional ISP architecture. No data caps. No throttling. No carrier-level data mining. Anyone with compatible off-the-shelf hardware could participate as a gateway and earn token-based incentives for verified service delivery.

The three archived repositories represent the core technical layers of that system:

magic-network/magic-protocol: the foundational layer. Defined proof-of-availability (verifying that a gateway was reachable and operational) and proof-of-transport (verifying that a gateway was actually routing traffic, not just claiming availability). Token rewards were tied to proof-of-transport, not just presence on the network. This is structurally similar to proof-of-work in distributed ledger systems, but the work being proven is network utility rather than computation.

magic-network/magic-agent: agent software for gateway nodes. Responsible for broadcasting availability, handling routing requests, and reporting transport metrics back to the protocol layer for incentive settlement.

magic-network/magic-cli: command-line tooling for interacting with the Magic network, covering gateway configuration, network state queries, and credential management. The operator interface.
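The essay doesn't record how magic-protocol actually implemented its two proofs. What follows is only a minimal sketch of the ideas, built on stated assumptions. A verifier checks availability with a random-nonce challenge and response. Transport is evidenced by receipts that clients (not gateways) authenticate, so a gateway cannot inflate its own numbers. HMAC over pre-shared keys stands in for the asymmetric signatures a real deployment would use. Every name here (`Receipt`, `verified_bytes`, `tokens_per_mb`, and so on) is hypothetical.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass

# Hypothetical sketch, not the magic-protocol implementation. HMAC over
# pre-shared keys stands in for asymmetric signatures, so this verifier
# must know every party's key; a real protocol would check public keys.


def _mac(key: bytes, message: bytes) -> bytes:
    return hmac.new(key, message, hashlib.sha256).digest()


# Proof-of-availability: challenge/response.

def new_challenge() -> bytes:
    """A fresh random nonce, so a recorded answer cannot be replayed later."""
    return secrets.token_bytes(16)


def answer_challenge(gateway_key: bytes, nonce: bytes) -> bytes:
    """A reachable gateway proves it is live by MACing the verifier's nonce."""
    return _mac(gateway_key, nonce)


def verify_availability(gateway_key: bytes, nonce: bytes, answer: bytes) -> bool:
    return hmac.compare_digest(_mac(gateway_key, nonce), answer)


# Proof-of-transport: receipts authenticated by the client, not the gateway.

@dataclass(frozen=True)
class Receipt:
    client_id: str
    gateway_id: str
    session_id: str
    bytes_carried: int
    tag: bytes = b""  # MAC under the client's key; the gateway cannot forge it

    def message(self) -> bytes:
        fields = (self.client_id, self.gateway_id, self.session_id, str(self.bytes_carried))
        return "|".join(fields).encode()


def issue_receipt(client_key: bytes, client_id: str, gateway_id: str,
                  session_id: str, bytes_carried: int) -> Receipt:
    unsigned = Receipt(client_id, gateway_id, session_id, bytes_carried)
    return Receipt(client_id, gateway_id, session_id, bytes_carried,
                   _mac(client_key, unsigned.message()))


def verified_bytes(gateway_id: str, receipts: list[Receipt],
                   client_keys: dict[str, bytes]) -> int:
    """Sum bytes from authentic receipts naming this gateway, once per session."""
    seen: set[tuple[str, str]] = set()
    total = 0
    for r in receipts:
        key = client_keys.get(r.client_id)
        if key is None or r.gateway_id != gateway_id:
            continue  # unknown client, or a receipt for some other gateway
        if not hmac.compare_digest(_mac(key, r.message()), r.tag):
            continue  # forged or tampered receipt
        if (r.client_id, r.session_id) in seen:
            continue  # replayed receipt
        seen.add((r.client_id, r.session_id))
        total += r.bytes_carried
    return total


def reward(available: bool, transported_bytes: int, tokens_per_mb: float = 1.0) -> float:
    """Presence alone earns nothing; payout scales with verified transport."""
    if not available:
        return 0.0
    return tokens_per_mb * transported_bytes / 1_000_000


if __name__ == "__main__":
    gw_key, client_key = b"gateway-secret", b"client-secret"
    nonce = new_challenge()
    up = verify_availability(gw_key, nonce, answer_challenge(gw_key, nonce))
    receipt = issue_receipt(client_key, "c1", "gw1", "s1", 2_500_000)
    forged = Receipt("c1", "gw1", "s2", 9_000_000, b"\x00" * 32)
    carried = verified_bytes("gw1", [receipt, receipt, forged], {"c1": client_key})
    print(up, carried, reward(up, carried))  # True 2500000 2.5
```

Putting the receipt key on the client side is the design choice that matters. A gateway's own transport metrics are only a claim, while a receipt is evidence from the party that received the service. Deduplicating by client and session keeps a gateway from replaying one receipt to get paid twice.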

Magic reached the alpha stage, then was retired. Hologram kept its resources focused on cellular IoT connectivity (as it should have), and the economics of bootstrapping a global decentralized ISP alternative from scratch really sank in for the team. The repositories were archived before the project was formally wound down.

The 0.2 release notes from January 2019 give a snapshot of where the project was mid-alpha. Linux support was added alongside macOS, Python 2.7 was dropped in favor of 3.5+, and `magic-agent` got a re-issued certificate, replacing one that had been causing authentication failures across gateway installs. The next planned feature was an early payments implementation on Ethereum's Rinkeby testnet: the token incentive layer being built out in real time, not just theorized in a whitepaper.

Field Notes from the Alpha

My role on Magic was product lead, not core engineering. In practice that meant updating tech docs, fixing config files, and spending a lot of time at whiteboards unpacking how Merkle trees and asymmetric cryptography connected with packet transfers. Heady sessions. The kind where you leave knowing more than when you walked in, but also more aware of how much you don't know yet.
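The essay doesn't say exactly where Merkle trees fit into Magic's design. One common pattern is sketched here as an assumption, not as a description of magic-protocol. A batch of packets is hashed into a Merkle root, so a single asymmetric signature over the root commits to the whole batch. Any one packet can later be proven part of that batch with a handful of sibling hashes. All function names are hypothetical, and the signing step itself is left out.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    """Hash adjacent pairs; an odd node out is paired with itself (Bitcoin-style)."""
    if len(level) % 2:
        level = level + [level[-1]]
    return [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(packets: list[bytes]) -> bytes:
    """Commit to a whole batch of packets with one 32-byte hash."""
    if not packets:
        raise ValueError("need at least one packet")
    level = [sha256(p) for p in packets]
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_proof(packets: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling sits on the right."""
    level = [sha256(p) for p in packets]
    proof = []
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level
        sibling = index ^ 1
        proof.append((padded[sibling], sibling > index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_packet(packet: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from one packet plus its proof (about log2(n) hashes)."""
    node = sha256(packet)
    for sibling, sibling_on_right in proof:
        node = sha256(node + sibling) if sibling_on_right else sha256(sibling + node)
    return node == root


if __name__ == "__main__":
    batch = [f"packet-{i}".encode() for i in range(5)]
    root = merkle_root(batch)  # the single value a gateway would sign
    proof = merkle_proof(batch, 3)
    print(verify_packet(batch[3], proof, root))   # True
    print(verify_packet(b"forged", proof, root))  # False
```

A production design would also hash leaves and interior nodes with different prefixes, as Certificate Transparency (RFC 6962) does, so an interior node can't be passed off as a leaf.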

The harder problem was structural. A decentralized network is a marketplace, and it runs into what I later learned Andrew Chen calls the Cold Start Problem: you can't attract demand when there's no supply, and you can't attract supply when there's no demand. Chen's framing identifies a "hard side" of any network: the participants who do the most work to make it valuable and who take the most effort to recruit. For Magic, the hard side was unambiguously node operators. Coverage had to exist before anyone would trust the network with real traffic. So the order of operations was supply first: build the community of first nodes, prove the protocol works in the wild, then let demand follow.

Some of what that looked like in practice:

Early signal testing happened in the neighborhood around the Hologram office. Chicago's downtown cellular infrastructure is dense, with carriers stacking antennas on top of each other to compete for LaSalle Street coverage. The head of engineering upstairs; me walking the block below, watching whether the connection held. It did.

We demoed the network to an innovation lab at a major carrier. They weren't fans of the premise. A decentralized ISP that routes around carrier chokepoints is not a pitch that lands well with carriers. We tried the partnership route anyway.

The launch came at one of what felt like a thousand blockchain conferences in San Francisco that year. We had MikroTik routers pre-configured with `magic-agent` and worked through metering bugs along the way.

Recruiting early nodes for a decentralized network requires a different approach than a standard alpha launch. The audience isn't early adopters in the conventional sense. It's people who already believe the internet access model is broken: Reddit communities organized around mesh networking, Meshtastic users building off-grid communication infrastructure, networking hobbyists who had been thinking about this longer than we had. Think of the Brian Gragg recruitment model from Daniel Suarez's Daemon: find the people who are already working toward the same thing, and offer them a better protocol.

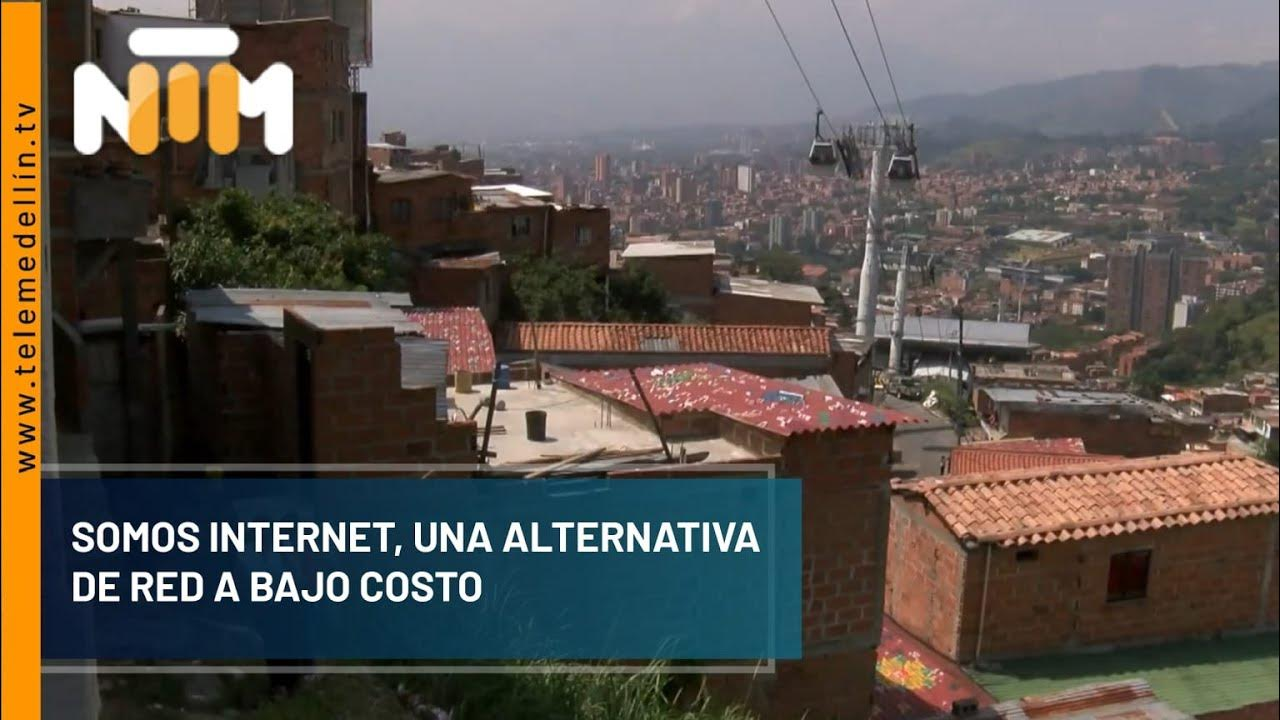

One of the first global nodes came from Medellín. Somos Internet was building community-rooted internet infrastructure as an alternative to incumbent carriers, the same adversarial premise at a different layer of the stack. The conversations were a delight.

Their self-assembled repeaters and signal boosters worked best from the roof of a school at the highest point in their neighborhood. Coverage radiated out from there into the surrounding streets. Neighborhood residents, regular people following installation manuals and basic setup guides, became successful node operators. They ran with it.

That's the proof of concept you can't get from a controlled environment. Not engineers in an office. A person on a rooftop in Colombia with a homemade repeater, connected to a network that two years later would be sealed in permafrost.

Medellín was one node. We were building toward hundreds. Each new install brought a different hardware setup, a different skill level, a different failure mode. More than 200 installs in total. Each fix unlocked the next problem. Debugging across time zones. Downtime that didn't matter yet because five-nines uptime isn't the goal in an alpha; learning what breaks is. The frenetic speed-learning of an entirely new domain while simultaneously trying to hold the community together.

The methodology, blockchain incentives for decentralized infrastructure, has since cycled through hype and skepticism about the way you'd expect. But the underlying premise was sound: the internet doesn't have to be organized the way it currently is, and the people most motivated to change it will find each other. One node at a time.

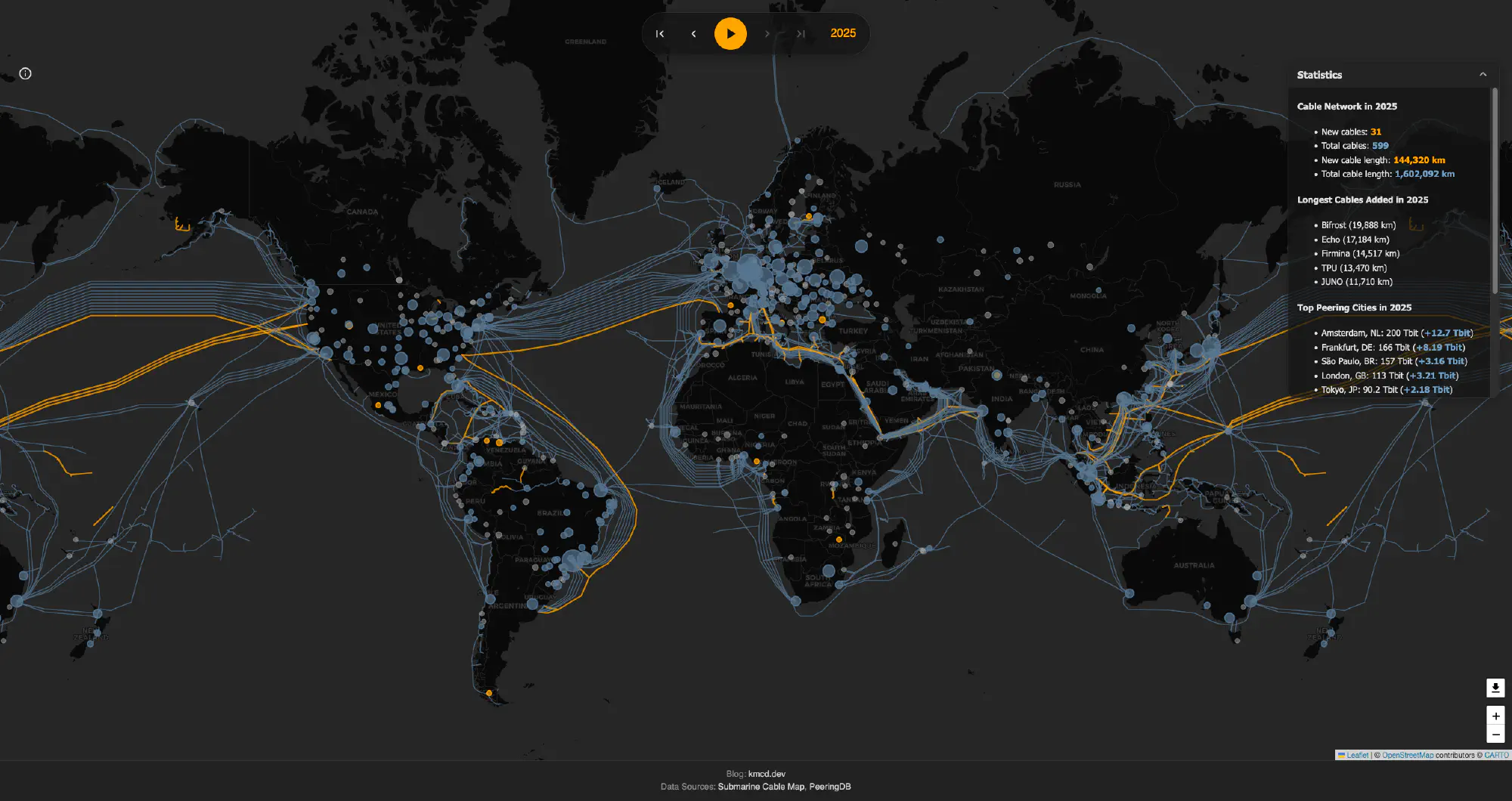

Distributed Mesh vs. Hub-and-Spoke

Traditional ISP architecture is hub-and-spoke by design. Traffic flows through a small number of carrier-controlled chokepoints: backbone nodes, regional exchanges, last-mile infrastructure owned by a handful of entities per market. This architecture is efficient to operate, easy to monitor, and straightforward to monetize. It is also straightforward to surveil, throttle, and attack at the chokepoints.

The mesh is the adversarial alternative. Every node is a potential gateway. Traffic routes around failures and congestion dynamically. No single entity controls access.
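A toy comparison can make the chokepoint argument concrete. It rests on simplifying assumptions. Nodes and links form an undirected graph, a failure removes one node, and "service" means any two surviving nodes can still reach each other. Both topologies are invented for illustration. One is a hub-and-spoke network with a single carrier node (`"isp"`) and six subscribers. The other is a seven-node ring standing in for the smallest possible mesh.

```python
from collections import deque
from typing import Optional

Edge = tuple[str, str]


def build_graph(edges: list[Edge]) -> dict[str, set[str]]:
    graph: dict[str, set[str]] = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def connected_fraction(edges: list[Edge], removed: Optional[str] = None) -> float:
    """Share of surviving node pairs that can still reach each other."""
    graph = build_graph(edges)
    survivors = {n for n in graph if n != removed}
    total_pairs = len(survivors) * (len(survivors) - 1) // 2
    if total_pairs == 0:
        return 1.0
    unvisited, connected_pairs = set(survivors), 0
    while unvisited:
        start = unvisited.pop()
        component, queue = {start}, deque([start])
        while queue:  # breadth-first search that never enters the failed node
            for nxt in graph[queue.popleft()]:
                if nxt != removed and nxt not in component:
                    component.add(nxt)
                    queue.append(nxt)
        unvisited -= component
        connected_pairs += len(component) * (len(component) - 1) // 2
    return connected_pairs / total_pairs


def worst_single_failure(edges: list[Edge]) -> float:
    """Connectivity left after the most damaging single-node failure."""
    return min(connected_fraction(edges, removed=n) for n in build_graph(edges))


if __name__ == "__main__":
    subscribers = list("abcdef")
    hub_and_spoke = [("isp", s) for s in subscribers]
    ring = list("abcdefg")
    mesh = [(ring[i], ring[(i + 1) % len(ring)]) for i in range(len(ring))]
    print(worst_single_failure(hub_and_spoke))  # 0.0: lose "isp", lose everything
    print(worst_single_failure(mesh))           # 1.0: traffic routes the other way
```

A ring is the weakest possible mesh. Two well-placed failures split it, so real meshes add redundant links, and each of those links is one more thing the participants have to coordinate.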

The coordination cost is where most decentralized network projects fail or stall. A hub-and-spoke operator can make unilateral decisions about routing, capacity, and pricing. A mesh requires a protocol that all participants run, with no central authority to resolve disputes or enforce behavior. Proof-of-availability and proof-of-transport are attempts to solve the honest-reporting problem without a trusted intermediary: the network itself verifies the claim.

This design pattern has surfaced in adjacent projects. Meshtastic uses LoRa radio to build off-grid mesh networks for communication without internet infrastructure. Helium built a decentralized wireless network with token incentives for coverage providers. Both encountered the same coordination problem Magic was attempting to solve at the protocol layer.

Why the Protocol Layer Outlasts the Product

Magic did not survive as a commercial product. The repositories were retired and the project was not continued. But the repositories are now in the Arctic because they were public, active, and real enough to be captured in the February 2020 snapshot.

The product lifecycle and the protocol lifecycle are different things. A product can be retired when it fails to achieve commercial viability. The protocol it encoded, meaning the specific technical decisions about how to verify availability, settle incentives, and route traffic in a trustless mesh, is now part of a public record meant to last 1,000 years.

The problem Magic was designed to solve has not been solved. ISP architecture remains centralized. Carrier data practices remain the same. The chokepoints are still there. Someone building a decentralized internet access layer in 2040 or 2080 or 2120 will be working on the same problem. Whether or not they ever look at a coal mine in Svalbard, the problem shapes the solution space the same way.

Protocol work is documentation of the problem as much as it is a proposed solution. That's what gets preserved.

Connection to Adjacent Work in This Series

The repositories archived in 2020 predate the vocabulary that would later describe them. `magic-agent` is an agent layer for a distributed network, written before "agentic" entered mainstream technical discourse. `magic-protocol` is a trustless coordination layer for a mesh, written before "trustless coordination" became standard language in distributed systems conversations.

Looking across the other systems documented in this essay series, I see a common structure in all four: distributed nodes, shared protocol, no central authority required for the system to function. The specific domain changes. The structural bet is the same.

It would be tidy to claim this was intentional. It wasn't. But it's legible now in a way it wasn't from inside any single project, and that's worth noting as a property of writing things down.

Notes on the Arctic Vault Itself

The deposit used photosensitive archival film rather than digital storage, specifically to avoid dependence on future hardware compatibility. A reasonable bet when the goal is 1,000 years and you can't know what hardware survives.

The 21 terabytes include contributions from millions of developers across thousands of projects. Three of those repositories are from a nine-month R&D effort at a cellular IoT company in Chicago that was trying to figure out whether the internet could be organized differently.

This essay is part of Deep Familiar Ground — a series on distributed nodes, earned trust, and the quiet work of building systems that don't fall apart when you're not watching.