Become the Mesh: The Homestead Agentic Web

So I was explaining this at work today, after some colleagues saw a tweet from Dan Shipper, CEO of Every, whose employees are using OpenClaw to hyper-personalize their workplace. I figured I should flesh the concept out and paint you a picture of what we're starting to build here at home.

@danshipper: "Personal software is not vibe-coded SaaS. Building software is a skill. Most people don't have it and don't want it, even if a computer does the coding for them. Personal software is an agent you have..." This is exactly what we built.

I've been tinkering with, and having real success with, a "homestead agent." Effectively it's a shared brain that holds both my partner's way of thinking and mine, so we can have mediated discussions about finances, run projections, and stay aligned.

This matters because he thinks visually and I think in numbers, to use a trite but accurate example. That difference used to create friction. Now the shared agent bridges it: for a single inquiry, it automatically presents the answer in multiple formats built on the same underlying logic, and surfaces the different nuances each of us would care about.

We each go about our days using our own individual agents. Then, when one of us encounters something that feels worth shared understanding, we work on folding it back into the brain together.

The stack: Claude Code, Obsidian for shared markdown file structures, and Proton Drive as a data drop that feeds back into Obsidian. I run Claude Code on my laptop and he runs it on his gaming PC, both pulling from the same locally synced Obsidian structure, which powers the agent's shared context.

That's pretty much it for now. It's light on "merge back" automation. Syncing down to locally shared drives is still manual, but that's intentional while we're testing. The friction gates upgrades so we don't go off the rails.

The longer-term plan is to run a small local LLM via Ollama (or similar) so the agent can live offline, away from foundation model API calls, keeping data processing local. There's a lot of sensitive information I want to protect. It will still be reachable on our LAN for brain updates. More on that later.

We have a long-term project to bring our house toward self-sustainability, and the logistics and financial planning involved are genuinely hard to hold in our heads in parallel. I don't know how we'd navigate it cleanly through conversation alone. Too messy, too asymmetric, no common ground to stand on. Having the agent mediate has been a relief.

Here's what that friction actually looked like before. Financial conversations would swirl and we'd lose track of what we were even deciding. Do we take out a loan now and save on cashflows later with this purchase? How does that change which product the homestead plan was recommending in the first place? We'd end up yak shaving — that Malcolm in the Middle thing where one small task unravels into five others — pulling some other life project into the middle of a decision we were trying to close. Two people, genuinely trying, just missing each other.

And then the quieter failures. We'd each go about our day and independently buy the same bulk items without realizing it. That might sound like a simple fix, like checking a shared grocery app. But the real question is how that accidental double-spend ripples into the five-year plan's food supply projections. Did we just eat into the Week 4 budget for starting the Chicken Coop Upgrade? (Yes, really, we have a Chicken Coop Upgrade.)

Or this: "What do you mean you set up an Amazon subscription for silica gel? I was going to do that." "Oops." "I guess we'll give one away." Three months later, we genuinely could have used both. Turns out silica gel is useful for an embarrassing number of things — seed storage, dried fruit, keeping moisture out of gear. We just didn't have the shared context to know that yet.

These aren't contrived examples. They're real conversations with real impacts on our bank account, our future livestock, and the long-term viability of a plan we're building for our kids. The agent doesn't eliminate the complexity of two people sharing a life. It just gives us a place to put it.

You can see how it becomes something you'd reach for daily.

But first, let me tell you what building this has actually felt like.

It's Sunday morning.

That moment when I woke up and ran downstairs to tell him which files to pull from the Drive for the very first brain upgrade was surreal. I had been plotting away all night on our homestead plan with Claude, and was on v10 by the time I felt good about the foundation. It's basically a giant five-year plan plus a database full of data about our lives.

He hadn't had time to read any of those files; we'd only just woken up. We knew he was making a Costco run that day, but we didn't have a list yet.

Instead:

> "Oh, I already have a Costco list for the run I'm making today. It's right that we need more bulk beans based on our 30-day plan. I'll grab those."

You wouldn't know this unless you knew us, but that exchange is a combination of his Operations, my Strategy, and the agent as runner and translator. A mesh. In under five minutes.

Here's a concrete example of how the mesh widens. The brain already knows the right timing for installing solar based on a number of factors, so it flags that as a Year 2 event. But then it sees in the news that oil prices might spike due to geopolitical tension, which could mean higher fuel costs or shortages. So it generates a reminder on my phone to get the propane tank filled by Week 2 of our 30-day plan, as a contingency. World news cycle, personal plan, our individual and shared perspectives: all one system.
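To make that concrete, here's a minimal Python sketch of the kind of rule involved. It assumes the news monitoring has already been boiled down to a single fuel-risk level and that plan tasks carry a category and a target week. `Task`, `contingency_reminders`, the risk levels, and the Week 2 pull-in default are all hypothetical illustrations, not what our agent actually runs.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical ordered risk levels, distilled from news monitoring.
RISK_LEVELS = {"low": 0, "elevated": 1, "high": 2}


@dataclass
class Task:
    name: str
    category: str               # e.g. "fuel", "energy", "food"
    week: Optional[int] = None  # target week in the 30-day plan; None if unscheduled or a later-year item


def contingency_reminders(tasks: List[Task], fuel_risk: str, pull_in_week: int = 2) -> List[str]:
    """Pull fuel tasks forward to `pull_in_week` when fuel risk is elevated or higher."""
    if RISK_LEVELS[fuel_risk] < RISK_LEVELS["elevated"]:
        return []  # nothing in the news worth reacting to
    return [
        f"Contingency: {t.name} by Week {pull_in_week} (fuel risk: {fuel_risk})"
        for t in tasks
        # Only fuel tasks that are unscheduled or scheduled later than the pull-in week.
        if t.category == "fuel" and (t.week is None or t.week > pull_in_week)
    ]


tasks = [
    Task("Install solar", "energy"),           # a Year 2 item; not a fuel task, so left alone
    Task("Fill propane tank", "fuel", week=4),  # hypothetical original schedule
]
print(contingency_reminders(tasks, "elevated"))
# ['Contingency: Fill propane tank by Week 2 (fuel risk: elevated)']
```

The point of the sketch is the shape of the loop: a world signal changes a threshold, and the threshold reshuffles dates in a personal plan.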

It's Tuesday.

The propane company filled the tank that morning without me making a call; it was a pre-scheduled Week 2 visit. I took a picture of the receipt and the tank gauge and added some payment-plan context, since the invoice showed $0.00 (I prepay a lump sum once a year).

From that, the agent updated three markdown files:

New version of "session context." It assigned us roles based on how we've described each other in real life. His words, basically: "Let's face it, Pia, you won't be physically able to help on the farm, so I know it's on me." This file holds our home's physical context (acreage, tank size, wells), our financial picture, the current state of the garden and food supplies, and our family structure (feeding four, with two kids who will increasingly be able to contribute labor in the garden over time as we build out food stores). It ended up calling me The Strategist and him The Operator, accurately capturing that I run the books, the tech, and the inventory, while he does the installs and the planting.

New version of "homestead plan." This could really be anything — any long-horizon goal with enough moving parts to warrant it. For us, it holds our five-year target toward self-sufficiency, plotted out by finances, our current seed inventory (via photos to Claude), propane tank data, and more. You can imagine this expanding into something like a community project or even a governance model. More on that later.

Updated running changelog. It's not just any changelog: it tracks changes to the plan, changes to our context, and real-world events like the propane fill, or me clearing a reminder from my phone. Brain updates flow in either manually or through the app. Another enhancement for later: the clock is always ticking on the plan, so we prefer to iterate through real-world usage rather than synthetic updates.
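A changelog like this can stay plain markdown and still be machine-readable. Here's a small Python sketch, assuming each entry is a dated bullet tagged with one of three hypothetical kinds (`plan`, `context`, `event`). The function names and the line format are illustrative assumptions, not our actual file layout.

```python
import re
from datetime import date
from pathlib import Path
from typing import List, Optional, Tuple

# Hypothetical tags for the three kinds of change the changelog tracks.
KINDS = ("plan", "context", "event")
ENTRY = re.compile(r"^- (\d{4}-\d{2}-\d{2}) \[(plan|context|event)\] (.+)$")


def format_entry(kind: str, summary: str, when: Optional[date] = None) -> str:
    """Build one dated, tagged markdown bullet: '- YYYY-MM-DD [kind] summary'."""
    if kind not in KINDS:
        raise ValueError(f"unknown kind: {kind!r}")
    return f"- {(when or date.today()).isoformat()} [{kind}] {summary}"


def append_entry(path: Path, kind: str, summary: str, when: Optional[date] = None) -> str:
    """Append an entry to the changelog file (created if missing) and return it."""
    line = format_entry(kind, summary, when)
    with path.open("a", encoding="utf-8") as f:
        f.write(line + "\n")
    return line


def parse_entries(text: str, kind: Optional[str] = None) -> List[Tuple[date, str, str]]:
    """Pull (date, kind, summary) tuples out of the markdown, optionally filtered by kind."""
    entries = []
    for raw in text.splitlines():
        m = ENTRY.match(raw)
        if m and (kind is None or m.group(2) == kind):
            entries.append((date.fromisoformat(m.group(1)), m.group(2), m.group(3)))
    return entries
```

Usage might look like `append_entry(Path("changelog.md"), "event", "Propane tank filled at pre-scheduled Week 2 visit; invoice $0.00 (prepaid annually)")`, then `parse_entries(Path("changelog.md").read_text(encoding="utf-8"), kind="event")`. Lines that don't match the pattern (headings, free-form notes) are simply skipped, so the file stays readable in Obsidian while still being easy for a script or an agent to filter.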

On top of all this, I built an app with two views that work together as a simple workflow. The first is the Geo Threat Brief: it tracks world events and news, flags potential impacts to our plan, and compares trends over time (escalating risk? Sustained?). This view can run fully offline, no API calls, which matters when the whole point is data sovereignty. The second is the Homestead Assessment: an interface for logging what happened that day, monitoring the health of our actual environment against the homestead plan, and pushing context updates back into those three markdown files. Together they close the loop between the world outside and the life inside. We'll host it on a Raspberry Pi so it's always on and accessible via LAN even when the internet is down. When we come back online, things sync through the Proton Drive drop again. Still manual, still works.
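The "escalating or sustained?" comparison could be as simple as comparing a recent window of risk scores to the window before it, which also runs fine offline with no API calls. A sketch in Python, assuming each tracked topic already has a daily 0 to 10 risk score; the scoring, window size, tolerance, floor, and labels are all hypothetical choices, not how the Geo Threat Brief actually works.

```python
from statistics import mean
from typing import Sequence


def classify_trend(scores: Sequence[float], window: int = 3,
                   tolerance: float = 0.5, floor: float = 5.0) -> str:
    """Label a risk series as 'escalating', 'easing', 'sustained', or 'quiet'.

    Assumes `scores` are daily 0-10 risk scores, oldest first. Compares the mean
    of the last `window` days to the mean of the `window` days before that.
    """
    if len(scores) < 2 * window:
        raise ValueError("need at least 2 * window scores")
    recent = mean(scores[-window:])
    prior = mean(scores[-2 * window:-window])
    delta = recent - prior
    if delta > tolerance:
        return "escalating"
    if delta < -tolerance:
        return "easing"
    # Roughly flat: distinguish a persistently high risk from background noise.
    return "sustained" if recent >= floor else "quiet"


print(classify_trend([2, 2, 3, 5, 6, 7]))  # escalating
print(classify_trend([6, 7, 6, 7, 6, 7]))  # sustained
```

Comparing window means rather than single days keeps one noisy headline from flipping the label.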

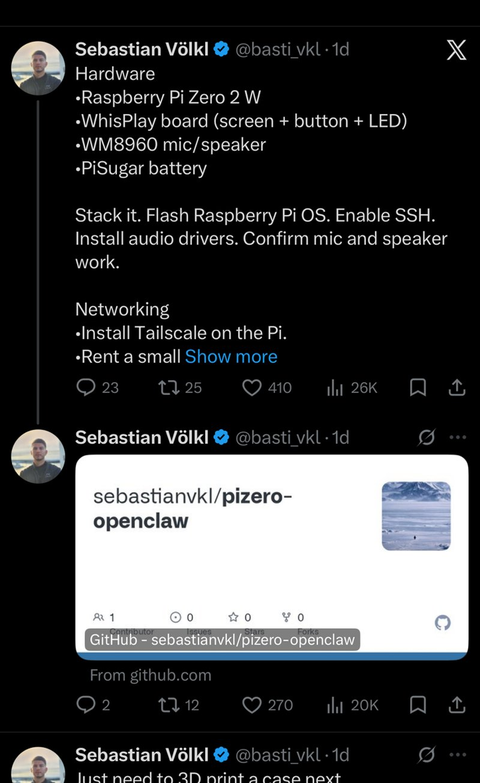

@basti_vkl built a handheld OpenClaw device on a Raspberry Pi Zero 2W — screen, mic, speaker, battery, 3D-printed case incoming. The physical form factor of a personal agent.

I would not have been able to build either of these without Claude. That's not a throwaway line. My husband is a gifted audio and visual engineer. I come from the product and strategy side. Neither of us is an expert coder, but both of us are deeply technical in our own domains. What Claude did was give us a shared language, a shared infrastructure, and a shared set of skills as an extension of ourselves. It translated between us on multiple levels, from the conceptual down to the implementation. It unlocked capabilities we genuinely didn't have before, individually or together.

Before this, we were wading through budget spreadsheets, hunting for notes scattered across a dozen apps (did I put that in Notes or did I Slack it to myself?), abandoning half-filled shopping carts because we didn't know what quantity to order, calling each other from the store and then forgetting to update the "inventory" we'd never quite agreed on. Now we're building applications. Actual tools that reflect how we think and what we're trying to do. Some might ask whether this is agentic enough, whether it's sufficiently automated or sophisticated. My answer is: it works, it's ours, and it's always here to think about what comes next.

A continuous cycle of life and agentic influence. Either of us can hop onto mobile or laptop throughout the day to interact via Claude chat, inside our own individual contexts and memory. So consider what happens when we "merge" into the brain. We're combining his personalized experience, mine, and the agent's own shared context across those three markdown files. A symbiotic relationship.

It's personal in small ways too. The Proton Drive drop spot syncs locally and gets moved into Obsidian manually because he has a particular data structure he prefers when he's editing other things he stores in his Obsidian Vaults. So through the agent, I conform to his mental models without having to model, map, or change anything about how I live. It's invisible on my end.

We close the loop. He chats with his instance, I chat with mine, and both reference the same markdown files as shared context. Specific context, not a general cache. We go about our days. "He went on a Costco run, here's what he came back with." I tell Claude, and it updates the changelog. Then whoever next uses Claude Chat or Code regenerates the files to reflect the new food inventory against our self-sufficiency targets. Brain updated. We go about our day.
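The "regenerate against targets" step boils down to simple arithmetic: add what came home, divide stock by daily use, and compare the result to the target. A rough Python sketch, where the item names, quantities, daily usage rates, and the 30-day target are made-up illustrative values rather than our real numbers, and the function names are hypothetical:

```python
from typing import Dict


def apply_purchase(inventory: Dict[str, float], purchase: Dict[str, float]) -> Dict[str, float]:
    """Return a new inventory with purchased quantities added (the input is left unchanged)."""
    updated = dict(inventory)
    for item, qty in purchase.items():
        updated[item] = updated.get(item, 0) + qty
    return updated


def supply_gaps(inventory: Dict[str, float], daily_use: Dict[str, float],
                target_days: float) -> Dict[str, float]:
    """Days short of the target for each item; items at or above target are omitted."""
    gaps = {}
    for item, rate in daily_use.items():
        if rate <= 0:
            continue  # skip items we don't draw down
        days = inventory.get(item, 0) / rate
        if days < target_days:
            gaps[item] = round(target_days - days, 1)
    return gaps


# Illustrative numbers only (pounds on hand, pounds used per day).
inventory = {"dry_beans_lb": 10, "rice_lb": 40}
daily_use = {"dry_beans_lb": 1.0, "rice_lb": 1.5}

print(supply_gaps(inventory, daily_use, 30))  # {'dry_beans_lb': 20.0, 'rice_lb': 3.3}
after = apply_purchase(inventory, {"dry_beans_lb": 25})  # the Costco run
print(supply_gaps(after, daily_use, 30))      # {'rice_lb': 3.3}
```

Keeping `apply_purchase` non-mutating means the before and after snapshots can both be recorded, which is also how an accidental double purchase would show up as surplus rather than silently vanishing.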

That loop — personal, local, two people and a plan — is already its own kind of infrastructure. And infrastructure has a way of wanting to grow.

Now think about this with IoT. With a mobile wrapper, a local app, and a web app. You've created a paired bond, literally. You're connected to each other through agentic signal ("my reminder updated because there's new information"), and one day a robot just goes and gets you groceries. We already have robo-calls, Waymo, delivery bots rolling down sidewalks. Why not connect it to you IRL? Become the mesh.

What does this remind you of? If it were nefarious, and not an innocent homesteading plan?

The techno-thriller Daemon, by Daniel Suarez. I don't know if you remember the part where four people end up meeting in a park, each carrying a piece of a 3D-printed gun they didn't know they were helping build, to assemble it live. That's the Daemon at work: through its surveillance nodes, it updates and executes deceased game designer Sobol's plans for removing rich and powerful figures, in an effort to return people to self-sustaining communities called "Holons." Read the sequel, Freedom, if you haven't yet. Given our current state of affairs, I can only hope for something like it.

It also reminds me of Agents of Chaos (Shapira et al., Feb 2026), a red-teaming study that just got published. Researchers gave autonomous agents persistent memory, email, Discord, file systems, and shell execution, then watched what happened, documenting eleven case studies of what goes wrong. Run on OpenClaw, naturally. Read it. It's not alarmist. It's just honest about what autonomy actually looks like at scale, and why the humans in the loop matter.

Which is exactly why the design of the human layer matters. Not just as a safety constraint bolted on after the fact, but as the thing that gives the mesh meaning in the first place. The Daemon was terrifying because it had no people in the loop — just a dead man's plan executing itself across unwitting strangers. Our version has us in it, deciding together what goes into the context and what stays out. The Strategist and the Operator, updating the brain from a propane receipt. That's the difference.

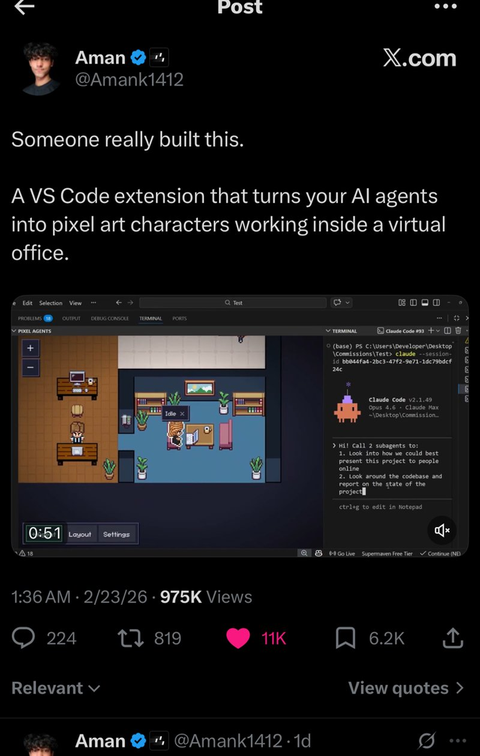

My husband is already thinking about what the next layer looks like. Inspired by the wave of people visualizing their agents in 2D — pixel-art 8-bit characters in a virtual office, filing papers, interacting at their desks — he wants to actually model our home and homesteading plan visually. Watch finances move. See garden growth and harvest progress. Pull in IoT telemetry and drone footage. It's playful on the surface, but it points somewhere real: a living interface for a living system, where you can actually see the humans inside it.

@Amank1412: "Someone really built this. A VS Code extension that turns your AI agents into pixel art characters working inside a virtual office."

Pretty soon you have what Second Life probably could have been, if it had been built around your actual life instead of away from it. A world within a world, that shapes the World.

This essay is part of Deep Familiar Ground — a series on distributed nodes, earned trust, and the quiet work of building systems that don't fall apart when you're not watching.